How can I continuously delay the Play Mode environment?

Hey, I know it is strange, but can we delay the Play Mode environment by a fixed amount of time (say 500 ms)?

I am simulating a car that sends real-time windscreen video to a control room. Because of the network, there is a delay of about 0.5 s. I want to see what the control room will be seeing.

Answer by Hellium · May 16, 2018 at 09:33 PM

Hello @JeyP4,

I've quickly reworked the code from the answer I gave on StackOverflow, but I haven't profiled the two solutions, so I don't know whether this one performs better.

Here is a new reworked version that uses an array, a custom struct storing the captured texture together with the time it was captured at, and two indices to track the captured frame and the rendered frame:

```csharp
using UnityEngine;
using System.Collections;

public class DelayedCamera : MonoBehaviour
{
    public struct Frame
    {
        /// <summary>
        /// The texture representing the frame
        /// </summary>
        private Texture2D texture;

        /// <summary>
        /// The time (in seconds) the frame was captured at
        /// </summary>
        private float recordedTime;

        /// <summary>
        /// Captures a new frame using the given render texture
        /// </summary>
        /// <param name="renderTexture">The render texture this frame must be captured from</param>
        public void CaptureFrom( RenderTexture renderTexture )
        {
            RenderTexture.active = renderTexture;

            // Create the texture the first time this buffer slot is used
            if ( texture == null )
                texture = new Texture2D( renderTexture.width, renderTexture.height );

            // Save what the camera sees into the texture
            texture.ReadPixels( new Rect( 0, 0, renderTexture.width, renderTexture.height ), 0, 0 );
            texture.Apply();
            recordedTime = Time.time;

            RenderTexture.active = null;
        }

        /// <summary>
        /// Indicates whether the frame was captured before the given time
        /// </summary>
        /// <param name="time">The time</param>
        /// <returns><c>true</c> if the frame was captured before the given time, <c>false</c> otherwise</returns>
        public bool CapturedBefore( float time )
        {
            return recordedTime < time;
        }

        /// <summary>
        /// Operator to convert the frame to a texture
        /// </summary>
        /// <param name="frame">The frame to convert</param>
        public static implicit operator Texture2D( Frame frame ) { return frame.texture; }
    }

    /// <summary>
    /// The camera used to capture the frames
    /// </summary>
    [SerializeField]
    private Camera renderCamera;

    /// <summary>
    /// The delay, in seconds
    /// </summary>
    [SerializeField]
    private float delay;

    /// <summary>
    /// The size of the buffer containing the recorded images.
    /// Must hold more than delay × (highest frame rate) frames.
    /// </summary>
    private int bufferSize = 256;

    /// <summary>
    /// The render texture used to record what the camera sees
    /// </summary>
    private RenderTexture renderTexture;

    /// <summary>
    /// The recorded frames
    /// </summary>
    private Frame[] frames;

    /// <summary>
    /// The index of the captured texture
    /// </summary>
    private int capturedFrameIndex;

    /// <summary>
    /// The index of the rendered texture
    /// </summary>
    private int renderedFrameIndex;

    /// <summary>
    /// The frame index
    /// </summary>
    private int frameIndex;

    private void Awake()
    {
        frames = new Frame[bufferSize];

        // Changing the depth buffer from 24 to 16 bits may improve performance. You can also try a more compact texture format.
        renderTexture = new RenderTexture( Screen.width, Screen.height, 24 );
        renderCamera.targetTexture = renderTexture;
        StartCoroutine( Render() );
    }

    /// <summary>
    /// Makes the camera render with a delay
    /// </summary>
    private IEnumerator Render()
    {
        WaitForEndOfFrame wait = new WaitForEndOfFrame();

        while ( true )
        {
            yield return wait;

            capturedFrameIndex = frameIndex % bufferSize;
            frames[capturedFrameIndex].CaptureFrom( renderTexture );

            // A negative delay would make every frame "captured before" the target time,
            // so the loop below would never end: clamp the delay to zero
            float targetTime = Time.time - Mathf.Max( 0f, delay );

            // Find the index of the frame to render: skip every frame captured before the target time.
            // The for loop body is intentionally empty
            for ( ; frames[renderedFrameIndex].CapturedBefore( targetTime ) ; renderedFrameIndex = ( renderedFrameIndex + 1 ) % bufferSize ) ;

            Graphics.Blit( frames[renderedFrameIndex], null as RenderTexture );
            frameIndex++;
        }
    }
}
```
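The frame-selection loop in `Render()` does not depend on Unity, so it can be replayed with timestamps alone. This is a hedged, Unity-free sketch in plain C# (top-level statements, C# 9 or later assumed): `CaptureAndSelect` is a hypothetical helper standing in for one iteration of the coroutine, and the alternating 0.125 s / 0.25 s frame times are made-up values that mimic a fluctuating frame rate.

```csharp
using System;

// Hypothetical, Unity-free sketch of one iteration of the reworked Render() coroutine.
// Each slot keeps only the capture time; in Unity it would also hold the Texture2D.
const int BufferSize = 8;
var recordedTimes = new float[BufferSize];
int frameIndex = 0;
int renderedFrameIndex = 0;

// Records a frame captured at `now`, then advances the render index past every frame
// captured before (now - delay). Returns the capture time of the frame to display.
float CaptureAndSelect(float now, float delay)
{
    int capturedFrameIndex = frameIndex % BufferSize;
    recordedTimes[capturedFrameIndex] = now;
    frameIndex++;

    // Clamp so a negative delay cannot make the loop spin forever.
    float targetTime = now - Math.Max(0f, delay);

    // Stops at the first frame captured at or after targetTime; the frame just
    // recorded always qualifies, so this takes at most BufferSize steps.
    while (recordedTimes[renderedFrameIndex] < targetTime)
        renderedFrameIndex = (renderedFrameIndex + 1) % BufferSize;

    return recordedTimes[renderedFrameIndex];
}

// Made-up irregular frame times: alternating 0.125 s and 0.25 s, with a 0.5 s delay.
float time = 0f;
float shown = 0f;
for (int i = 0; i < 20; i++)
{
    time += (i % 2 == 0) ? 0.125f : 0.25f;
    shown = CaptureAndSelect(time, 0.5f);
}
Console.WriteLine($"now = {time:F3} s, showing the frame captured at {shown:F3} s");
```

In this run the last frame is captured at 3.75 s and the one displayed was captured at 3.375 s. The loop shows the oldest frame that is not older than `delay`, so the visible lag is at most `delay` and falls short of it by less than one frame time, whatever the frame rate.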

Original answer

You can change the size of the buffer to make the delay shorter or longer, but this version has no notion of time: the delay is a number of frames, so a fast computer (high frame rate) gets a shorter delay than a slower one.
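As a rough worked example, assume a steady frame rate $f$ (an assumption; the answer does not state one). The original code displays the slot at `(capturedFrameIndex + 1) % bufferSize`, which was filled $N - 1$ frames earlier, where $N$ is `bufferSize`. With the default $N = 16$:

$$
d \approx \frac{N - 1}{f}, \qquad \frac{15}{60\ \text{fps}} = 0.25\ \text{s}, \qquad \frac{15}{35\ \text{fps}} \approx 0.43\ \text{s}
$$

The same 16-frame buffer therefore delays the view about 1.7 times longer at 35 fps than at 60 fps, and a 0.5 s delay would need $N = 31$ at 60 fps but only $N \approx 19$ at 35 fps.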

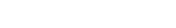

Here, I use a fixed-length array of textures. In the image, a grey cell does not (yet) contain a Texture2D, the dark blue cell holds the texture that has just been recorded, and the light blue cells hold textures recorded some frames earlier.

By keeping an index of the texture to replace (or instantiate), I know both the newest recorded texture and the oldest one, which is the next cell in the array (wrapping around to the start).

```csharp
using UnityEngine;
using System.Collections;

public class DelayedCamera : MonoBehaviour
{
    /// <summary>
    /// The camera used to record the scene
    /// </summary>
    [SerializeField]
    private Camera renderCamera;

    /// <summary>
    /// The size of the buffer containing the recorded images
    /// </summary>
    [SerializeField]
    private int bufferSize = 16;

    /// <summary>
    /// The render texture used to record what the camera sees
    /// </summary>
    private RenderTexture renderTexture;

    /// <summary>
    /// The recorded frames
    /// </summary>
    private Texture2D[] frames;

    /// <summary>
    /// The captured texture index
    /// </summary>
    private int capturedFrameIndex;

    /// <summary>
    /// The frame index
    /// </summary>
    private int frameIndex;

    private void Awake()
    {
        // A buffer size of 0 set in the Inspector would cause a division by zero below
        bufferSize = Mathf.Max( 1, bufferSize );
        frames = new Texture2D[bufferSize];

        // Changing the depth buffer from 24 to 16 bits may improve performance. You can also try a more compact texture format.
        renderTexture = new RenderTexture( Screen.width, Screen.height, 24 );
        renderCamera.targetTexture = renderTexture;
        StartCoroutine( Render() );
    }

    private IEnumerator Render()
    {
        WaitForEndOfFrame wait = new WaitForEndOfFrame();

        while ( true )
        {
            yield return wait;

            capturedFrameIndex = frameIndex % bufferSize;

            // Copy the current frame into a texture
            RenderTexture.active = renderTexture;

            // Create a new texture if none has been created yet at the given array index
            if ( frames[capturedFrameIndex] == null )
                frames[capturedFrameIndex] = new Texture2D( renderTexture.width, renderTexture.height );

            // Save what the camera sees into the texture
            frames[capturedFrameIndex].ReadPixels( new Rect( 0, 0, renderTexture.width, renderTexture.height ), 0, 0 );
            frames[capturedFrameIndex].Apply();
            RenderTexture.active = null;

            // Render the oldest recorded frame, which is in the next slot of the ring
            int oldestFrameIndex = ( capturedFrameIndex + 1 ) % bufferSize;
            if ( frames[oldestFrameIndex] != null )
            {
                Graphics.Blit( frames[oldestFrameIndex], null as RenderTexture );
            }

            frameIndex++;
        }
    }
}
```
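The ring-buffer index arithmetic described above can be checked without Unity. This is a hedged sketch in plain C# (top-level statements, C# 9 or later assumed), not part of the original answer: each slot stores a frame number instead of a `Texture2D`, and `BufferSize = 4` is an arbitrary illustrative value.

```csharp
using System;

// Hypothetical, Unity-free sketch of the original answer's ring buffer:
// each slot stores the number of the frame it recorded instead of a Texture2D.
const int BufferSize = 4;
var frames = new int?[BufferSize]; // null = slot not filled yet (a grey cell)
int? lastShown = null;

for (int frameIndex = 0; frameIndex < 10; frameIndex++)
{
    int capturedFrameIndex = frameIndex % BufferSize;
    frames[capturedFrameIndex] = frameIndex;             // newest frame (the dark blue cell)

    // The oldest frame is always in the next slot, wrapping around to 0.
    int oldestFrameIndex = (capturedFrameIndex + 1) % BufferSize;
    if (frames[oldestFrameIndex] != null)                // empty until BufferSize frames exist
        lastShown = frames[oldestFrameIndex];
}

Console.WriteLine($"captured frame 9, showing frame {lastShown}");
```

Here the frame shown (6) is always `BufferSize - 1` = 3 frames behind the one just captured (9); how many seconds that represents depends only on how long those 3 frames took to render.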

First of all, thanks for such a quick reply and this efficient code. Wow, this is really smooth... superb.

I am facing just two problems with this code:

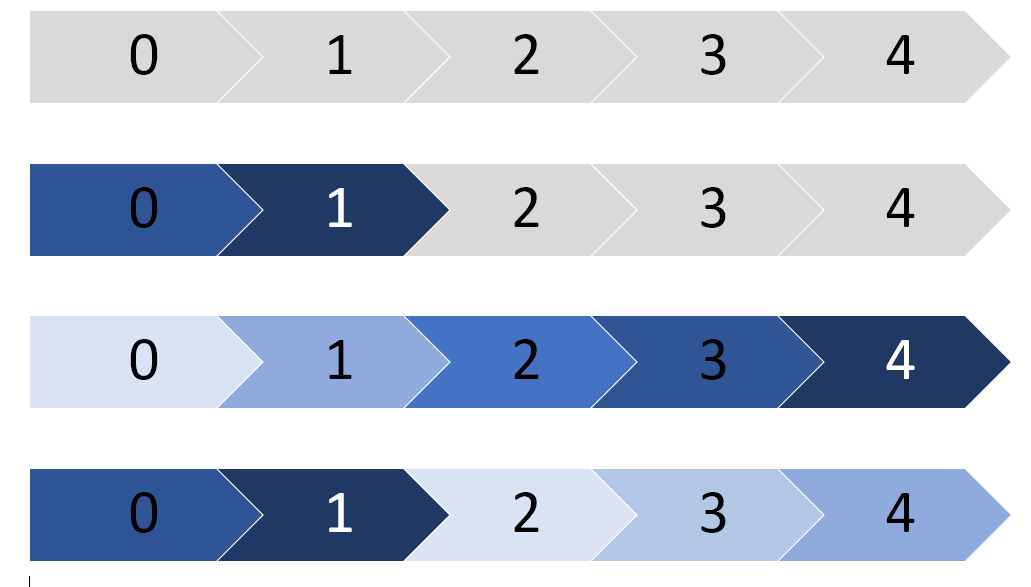

1. It constantly shows "No cameras rendering" (see the attached image).

2. Because there is movement and computation in the scene during play, the frame rate fluctuates between 35 and 60 fps, so a fixed buffer size does not give a fixed delay. Can something be done with `Time.deltaTime` to record the time taken by past frames and compute the buffer size automatically?

I've reworked my solution to handle a real, time-based delay.
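The reworked answer keeps a fixed `bufferSize` of 256, so the time-based lookup only works while the ring holds at least `delay` seconds of frames at the highest frame rate reached; with too few slots, new captures overwrite the frame that should be displayed and the visible delay ends up shorter than requested. A minimal sketch of that sizing rule in plain C# (top-level statements), where `RequiredBufferSize` and the `maxFps` bound are hypothetical names rather than part of the answer:

```csharp
using System;

// Hypothetical helper: smallest ring size that still holds `delay` seconds of frames
// when the game runs at up to `maxFps`, plus the frame captured this iteration.
int RequiredBufferSize(float delay, float maxFps)
{
    if (delay < 0f || maxFps <= 0f)
        throw new ArgumentException("delay must be >= 0 and maxFps must be > 0");
    return (int)Math.Ceiling(delay * maxFps) + 1;
}

Console.WriteLine(RequiredBufferSize(0.5f, 60f));   // prints 31
Console.WriteLine(RequiredBufferSize(0.5f, 144f));  // prints 73
```

With the default of 256 slots, a 0.5 s delay only runs out of room above (256 − 1) / 0.5 = 510 fps. Sizing the buffer from the delay can still save memory: the ring index cycles through every slot, and each one ends up holding a screen-sized `Texture2D` of roughly 8 MB at 1920×1080 (4 bytes per pixel, before mipmaps).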

You can disable the warning by clicking the small menu at the top right of the Game tab and unchecking "Warn if No Cameras Rendering".

Hey @Hellium, very good work. Thank you so much, it is working perfectly. :D

Glad it works smoothly for you. Thanks for the feedback; I will update my answer on StackOverflow! ;D

Answer by naomi_k · Mar 02, 2020 at 02:30 PM

Does anyone know how to implement this code with a VR headset (Oculus Rift S)?
