Optimising rendering of a large maze mesh - batching issues etc (updated)

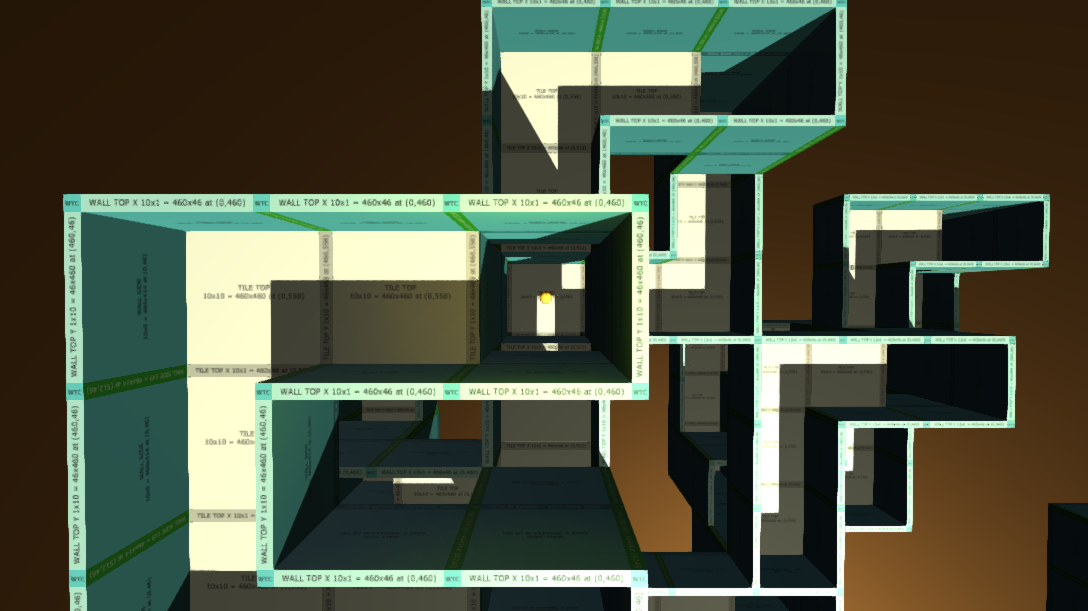

I've created a 3D maze which has multiple floors and shafts linking floors together. The main camera always looks down on the maze from above and is positioned a fixed distance directly above the player character. There is one directional light casting shadows on the maze, one directional back light (no shadows) and one point light attached to the player character (no shadows).

The maze mesh is generated at runtime and uses a texture atlas with different regions for floor tile tops, wall sides, wall tops, etc. The mesh material uses the Standard shader in Cutout mode. I also generate a submesh for some floor tiles in special cases.

The mesh is potentially very large - tens or even hundreds of thousands of vertices - but most of the time only a fraction of the maze is visible to the camera. So I feel some kind of optimisation is necessary.

Q. But as a relative newcomer to Unity and with a limited understanding of the rendering pipeline, I am unsure as to what the best optimisation strategy is. Any thoughts would be most welcome.

As there appears to be no frustum culling within a single large mesh, I have tried splitting the mesh into sections, each corresponding to part of one floor. The area covered by each section is configurable. But my tests (outlined below) did not show any definite performance improvement. I wonder if this is because batching is recombining the meshes I have deliberately split up, which on reflection seems like wasted effort. Having said that, it could still be worth it, as batching seems to leave out parts of the maze that are completely outside the camera frustum. I think it would be better, though, if I could get static batching to work, as then I could use larger mesh sections.

Here is what I've tried so far...

TEST 1 - Initially my algorithm created one single mesh. I used a moderately sized maze with a vertex count of 11,152 and a submesh vertex count of 28. Game tab maximised to near 1080p. Static and dynamic batching enabled in player settings. The DirectX 9 API is used.

Stats window: 70 - 77 fps, 19-25 batches, 0 saved by batching, 80-130k vertices (strangely increasing the less of the mesh was in view)

Profiler: Camera.Render 0.3-0.85 ms (mostly around 0.35)

Frame debugger: 27-29 draw calls

TEST 2 - As for test 1 but with static and dynamic batching disabled. Unity still seemed to be trying to batch with the batch count varying. No change at all to the results.

TEST 3 - Split maze mesh into floor sections as described above. Used quite a small section area to try and keep the vertex count below the 900 limit for dynamic batching - static batching did not occur even though the mesh section game objects were set to static at runtime. I set the mesh renderer shared material property in an attempt to induce batching. Same level design, resolution and batching settings as Test 1.

Stats window: 67 - 75 fps, 80-150 batches, 15-23 saved by batching, 40-60k vertices

Profiler: Camera.Render 0.4-0.7 ms (mostly around 0.45)

Frame debugger: 110-127 draw calls, dynamic batches used

TEST 4 - As for test 3 but with static and dynamic batching disabled. Much lower frame rate of around 35-45.

============================================

UPDATE: new tests

TEST 5 - Single mesh of a larger test maze with a vertex count of 61,856. Profiled a development build running at 1080p full screen using the Fantastic quality setting (tweaked the shadow settings):

Camera.Render: 0.28-0.4 ms

Vertices: 814 K

Draw calls: 24

Static/dynamic batches: 0 / 0

Static/dynamic batched draw calls: 0 / 0

Max shadow casters: 6

Max used memory total: 188 MB

GPU usage: 4.8%

TEST 6 - Same maze as test 5 but using mesh sections. Static batching now working thanks to StaticBatchingUtility.Combine().

Camera.Render: 0.4-0.55 ms

Vertices: 65-125 K

Draw calls: 180-310

Static/dynamic batches: 10-16 / 0

Static/dynamic batched draw calls: 150-225 / 0

Max shadow casters: 185

Max used memory total: 188 MB

GPU usage: 4.3%

Current conclusion - I've been having a nightmare trying to interpret the erratic profiler output. Intuition would suggest that there should be a noticeable performance difference between using and not using sections with static batching. However this does not seem to be the case.

Perhaps this is because my idea of a large mesh is really not that big and so any difference in the relatively small render times is not big either. For now I will probably stick with my gut feeling that using static batching is the way to go. But if anyone has any more thoughts on what is theoretically best, I would very much appreciate it.

============================================

NOTE - I am running Unity 5.3.2 Free on Windows 7 Pro on a laptop with 16GB of RAM and an nVidia GTX 675M GPU. I also want to target OSX and Linux, and possibly eventually consoles and tablets.

Also note that during testing I found that the data in the Game stats window and the Profiler varied significantly, even when I had not changed the scene in any way. But I've tried to quote representative statistics.

When you assign materials to the various sections, are you assigning them as the sharedMaterial? This will help tremendously. I'd suggest the smaller sections. To get static batching to work you'll need to make your objects the child of another object and then call StaticBatchingUtility.Combine(GameObject parent) on the parent object. With the smaller sections Unity should be culling automatically. If not, you can try writing your own culling algorithm that disables/enables the non-visible objects. There are also a few tools on the asset store for this. Beyond that you just need to observe the profiler results and tinker.
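The "write your own culling" idea above could be sketched roughly as follows. This is only a minimal illustration, not a tested solution: the class name SectionCuller and its fields are hypothetical, and it assumes each maze section has its own Renderer and never moves. GeometryUtility.CalculateFrustumPlanes and GeometryUtility.TestPlanesAABB are existing Unity calls.

```csharp
using UnityEngine;

// Hypothetical helper: enables only the section renderers whose bounds
// intersect the camera frustum. Names and fields are assumptions.
public class SectionCuller : MonoBehaviour
{
    public Camera viewCamera;      // assumed: the top-down main camera
    public Renderer[] sections;    // assumed: one renderer per maze section

    private Bounds[] cachedBounds; // sections are static, so world bounds are cached once

    void Start()
    {
        cachedBounds = new Bounds[sections.Length];
        for (int i = 0; i < sections.Length; i++)
        {
            cachedBounds[i] = sections[i].bounds;
        }
    }

    void LateUpdate()
    {
        // Recomputed every frame because the camera follows the player.
        Plane[] planes = GeometryUtility.CalculateFrustumPlanes(viewCamera);
        for (int i = 0; i < sections.Length; i++)
        {
            sections[i].enabled = GeometryUtility.TestPlanesAABB(planes, cachedBounds[i]);
        }
    }
}
```

One caveat with this approach: a disabled renderer also stops casting shadows, so a section just outside the view whose shadow falls inside it would lose that shadow. Since Unity already culls each renderer against the frustum, a script like this is probably only worth trying if profiling suggests the built-in culling is not enough.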

Naphier, thank you so much for your comment. I made my question pretty long because so many times I see comments asking for more detail. But I feared I had overdone things and that nobody would bother to read it!

Anyway, yes I do indeed set sharedMaterial (actually sharedMaterials, for the submesh material too). By the way, the docs for the material property state: "This function automatically instantiates the materials and makes them unique to this renderer. It is your responsibility to destroy the materials when the game object is being destroyed..." I guess manual cleanup is unnecessary when setting sharedMaterial because there is no material copying going on.
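For anyone following along, the assignment described above might look something like this. It is a sketch under assumptions: the helper name SectionMaterials and its parameters are hypothetical, and only Renderer.sharedMaterial and Renderer.sharedMaterials are actual Unity properties.

```csharp
using UnityEngine;

// Hypothetical helper showing assignment through the shared properties,
// which reference the material asset instead of instantiating a copy.
public static class SectionMaterials
{
    public static void Assign(MeshRenderer renderer, Material atlasMaterial, Material specialTileMaterial)
    {
        if (specialTileMaterial == null)
        {
            // Section without a submesh: a single shared material.
            renderer.sharedMaterial = atlasMaterial;
        }
        else
        {
            // Section with a submesh: one shared material per submesh, in submesh order.
            renderer.sharedMaterials = new[] { atlasMaterial, specialTileMaterial };
        }
    }
}
```

By contrast, reading or writing renderer.material creates a per-renderer instance, which is what the quoted documentation warns about and which generally prevents renderers from batching together.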

I found that StaticBatchingUtility.Combine(GameObject parent) had no effect. I wondered if this was because a small portion of the maze was in a submesh or whether it was because I was using 3 materials: one for sections without a submesh, one for the main material of sections with a submesh and one for the submesh (but all 3 materials have the same texture atlas). Getting rid of the submesh and using just one material made no difference.

If I used StaticBatchingUtility.Combine(GameObject[] gos, GameObject parent) however, static batching took place - why should this make a difference?
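For reference, the two overloads being compared could be called along these lines. This is a sketch only: the class MazeBatcher and the method BatchSections are hypothetical, and it assumes the section objects have already been created as children of the object this script is attached to. It does not explain why the single-argument overload had no effect in the case above.

```csharp
using UnityEngine;

// Hypothetical component placed on the parent of all maze sections.
public class MazeBatcher : MonoBehaviour
{
    // Assumed to be called once, after every section mesh has been generated.
    public void BatchSections()
    {
        // Overload 1: combine all children of the root.
        // StaticBatchingUtility.Combine(gameObject);

        // Overload 2: pass the objects to combine explicitly.
        // Note: GetComponentsInChildren also returns a MeshFilter on the root itself, if present.
        MeshFilter[] filters = GetComponentsInChildren<MeshFilter>();
        GameObject[] sectionObjects = new GameObject[filters.Length];
        for (int i = 0; i < filters.Length; i++)
        {
            sectionObjects[i] = filters[i].gameObject;
        }
        StaticBatchingUtility.Combine(sectionObjects, gameObject);
    }
}
```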

But as before, even with static batching, profiling did not conclusively show the use of mesh sections to improve performance. I will soon update my original question to show the new test results.

I'm pretty sure when you combine you need to have the same material. Sometimes static batching doesn't seem to do a lot. Also, the last time I checked the profiler was reporting static batch numbers wrong. It was adding the static total batches # to the draw calls and not the 'batched draw calls'. Not sure if they fixed that yet.

> I'm pretty sure when you combine you need to have the same material.

That's what I thought but maybe I'm getting away with 3 materials as they all use the same texture. Or maybe batching is only working with sections that only use one material - though looking at the combined mesh in the editor, the whole maze appears to be included.

Have you looked at my latest test results yet? - added to the end of my original question. It doesn't seem to me that static batching has improved things much but maybe it would if the maze was even larger...
