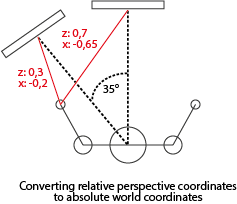

Combining local coordinates into world coordinates.

Hey there!

I'm running into problems combining two sets of local X,Y,Z coordinates. The coordinates come from Kinect readings, where each Kinect is the origin (0,0,0) of its own coordinate system. The camera's line of sight is the zero line: everything to the left of it has negative X, and everything to the right has positive X. The same applies to the Y axis. Z is the depth axis.

The Kinects are tilted forward at a ~15° angle. I calculate this angle automatically from the floor plane (X,Y,Z,W) that the Kinect returns.
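To make the tilt step concrete, here is a minimal sketch of how that angle could be derived. It assumes that (X, Y, Z) is the floor's upward normal expressed in camera space and that W is the sensor's height above the floor; the function name `tilt_from_floor_plane` is made up for illustration, and the sign convention (downward tilt is positive) is an assumption as well.

```python
import math

def tilt_from_floor_plane(x, y, z, w):
    """Return (tilt in degrees, sensor height) from a floor plane.

    Assumption: (x, y, z) is the floor normal in camera space and w is
    the distance from the sensor to the floor. A level camera sees the
    normal as (0, 1, 0); a camera pitched down by t degrees sees it as
    (0, cos t, -sin t), so the tilt is atan2(-z, y).
    """
    tilt_deg = math.degrees(math.atan2(-z, y))
    return tilt_deg, w

# Hypothetical reading from a sensor pitched down by about 15 degrees.
tilt, height = tilt_from_floor_plane(0.0, 0.9659, -0.2588, 1.2)
print(round(tilt, 1), height)
```

If the sign comes out flipped for a given setup, the axis convention is probably different from the one assumed here, and negating the result is enough.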

When I start the simulation, the user's current angle is determined from a straight line between the shoulders. Of course, this value is measured from the camera's perspective.
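As a rough sketch of that shoulder-line measurement: the angle can be taken in the horizontal (X, Z) plane with `atan2`. The function name `shoulder_angle` and the joint tuples are hypothetical, and the sketch assumes X grows to the right and Z grows away from the camera. Because the camera is tilted, the joints would ideally be tilt-corrected before measuring.

```python
import math

def shoulder_angle(left_shoulder, right_shoulder):
    """Angle in degrees of the shoulder line around the vertical axis.

    Each argument is an (x, y, z) tuple in camera space. The result is
    0 when both shoulders are at the same depth, i.e. the user is
    square to the camera.
    """
    dx = right_shoulder[0] - left_shoulder[0]
    dz = right_shoulder[2] - left_shoulder[2]
    return math.degrees(math.atan2(dz, dx))

# Hypothetical joints: right shoulder further from the camera than the left.
left = (-0.16, 1.40, 1.89)
right = (0.16, 1.40, 2.11)
print(round(shoulder_angle(left, right), 1))
```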

I figured that knowing these two values for each camera would be enough to 'normalize' the coordinates so that the values can be averaged, but nothing I've tried has worked. I'm really lost in the math right now. I also know the X,Y,Z coordinates of each Kinect relative to the body, and I know the height at which each Kinect is mounted.

Example at 35° angle:

I'm really terrible at math, but I would like the person to be the origin (0,0,0) and then transform the coordinates from local (rotated) space to world space. That way I can compare and average the data. Could anyone supply code examples showing how to do that efficiently? Thanks a bunch!
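To illustrate the kind of transform being asked for, here is one possible sketch, not a definitive solution. It assumes each camera has a known downward tilt, a shoulder-line angle measured once at start-up on tilt-corrected joints, and a reference joint (for example the shoulder center) that serves as the shared origin. All function names are hypothetical. The steps are: rotate about the X axis to undo the tilt, subtract the reference joint, then rotate about the vertical axis so the shoulder line lies along X.

```python
import math

def level(point, tilt_deg):
    """Undo a downward camera tilt by rotating about the X axis."""
    x, y, z = point
    t = math.radians(tilt_deg)
    return (x,
            y * math.cos(t) - z * math.sin(t),
            y * math.sin(t) + z * math.cos(t))

def to_body_space(point, tilt_deg, yaw_deg, origin):
    """Map a camera-space point into a person-centered frame.

    origin is the raw camera-space position of the reference joint;
    yaw_deg is the shoulder-line angle seen by this camera.
    """
    px, py, pz = level(point, tilt_deg)
    ox, oy, oz = level(origin, tilt_deg)
    dx, dy, dz = px - ox, py - oy, pz - oz
    a = math.radians(yaw_deg)
    # Rotate about the vertical axis so the shoulder line maps onto +X.
    return (dx * math.cos(a) + dz * math.sin(a),
            dy,
            -dx * math.sin(a) + dz * math.cos(a))

def average(points):
    """Component-wise mean of a list of (x, y, z) tuples."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(3))
```

Each Kinect would get its own `tilt_deg`, `yaw_deg`, and `origin`; once every camera's joints pass through `to_body_space`, they share one frame and `average` can combine them.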
