Unity doesn't recognize compute shader

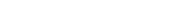

Hi, everyone! I'm brand new to writing compute shaders in Unity, and I seem to have run into issues very early on. When I try to use any of the ComputeShader functions defined by Unity, it throws an error.

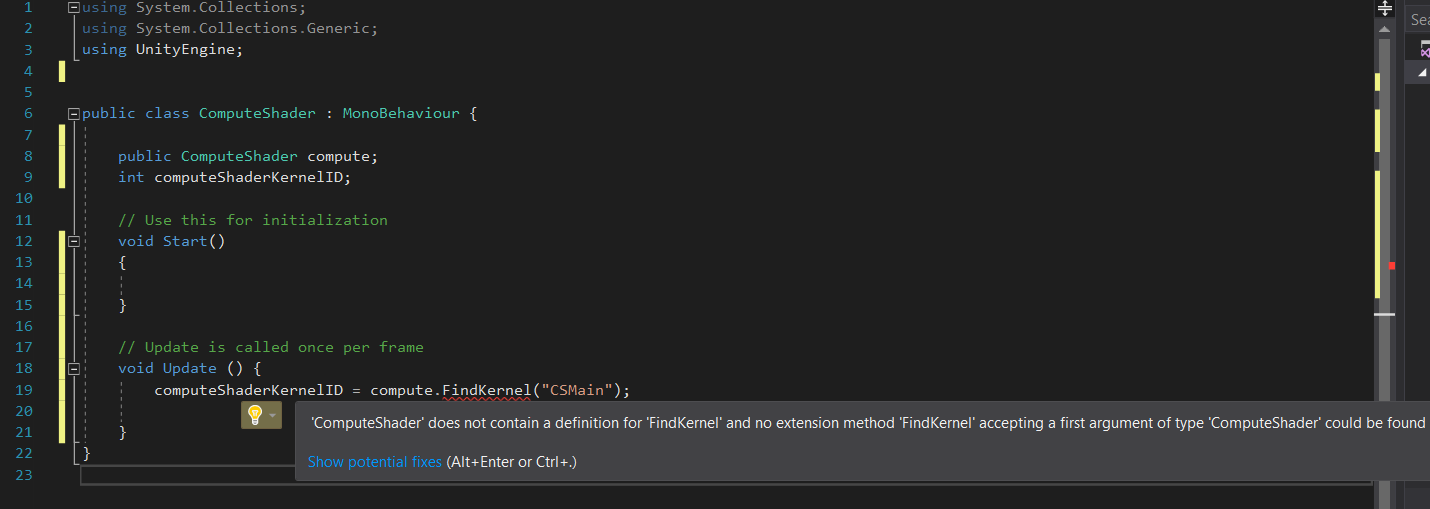

Also, when I generate the default compute shader and try to drag it onto the public ComputeShader variable of my very simple script, it simply won't attach. Unity does recognize it as type "Compute shader", since it shows up in the window of suggested objects to attach, but clicking on it there doesn't assign it either, no matter what I do.

I tried updating to Unity 2017.2 and Visual Studio 2017, and I've had no issues with Unlit shaders. I also set the device target to HoloLens, which runs DX11. I've gotten errors (silly ones that I resolved) that suggest it is using DX11, so compute shaders should be supported. The computer I'm developing on runs DX12.

Has anyone seen this before? I'm very sorry if this is just "user error".

Answer by Bunny83 · Nov 10, 2017 at 02:25 AM

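You are the second one today with the same mistake. You named your own class ComputeShader, so inside your script that name refers to your class instead of Unity's built-in UnityEngine.ComputeShader. That is why the ComputeShader functions aren't found and why the shader asset can't be assigned to the field. Rename your class and its file.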
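For anyone hitting the same thing, here is a minimal sketch of what the script could look like after the rename. The original script isn't shown in the thread, so the class name `ComputeShaderRunner`, the field name `shader`, and the body of `Start()` are assumptions made purely for illustration. `CSMain` is the kernel name used by Unity's default compute shader template.

```csharp
using UnityEngine;

// Hypothetical file name: ComputeShaderRunner.cs
// Any name works as long as the class (and file) is NOT called ComputeShader.
// A user class named ComputeShader in the global namespace takes precedence
// over the type imported by "using UnityEngine;", which hides the built-in one.
public class ComputeShaderRunner : MonoBehaviour
{
    // With the clash gone, this resolves to UnityEngine.ComputeShader,
    // so the .compute asset can be dragged onto it in the Inspector.
    public ComputeShader shader;

    void Start()
    {
        if (shader == null)
        {
            Debug.LogWarning("No compute shader assigned in the Inspector.");
            return;
        }

        // "CSMain" assumes the default compute shader template is unchanged.
        int kernel = shader.FindKernel("CSMain");
        Debug.Log("Found kernel index: " + kernel);
    }
}
```

Writing `UnityEngine.ComputeShader` out in full for the field type should also compile while the clashing class still exists, but renaming the class is the less confusing fix.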

Holy cow. You're right. That fixes everything. Thank you so much!
