
Depth buffer range is 0.5 - 0 instead of 1.0 - 0 as expected

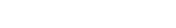

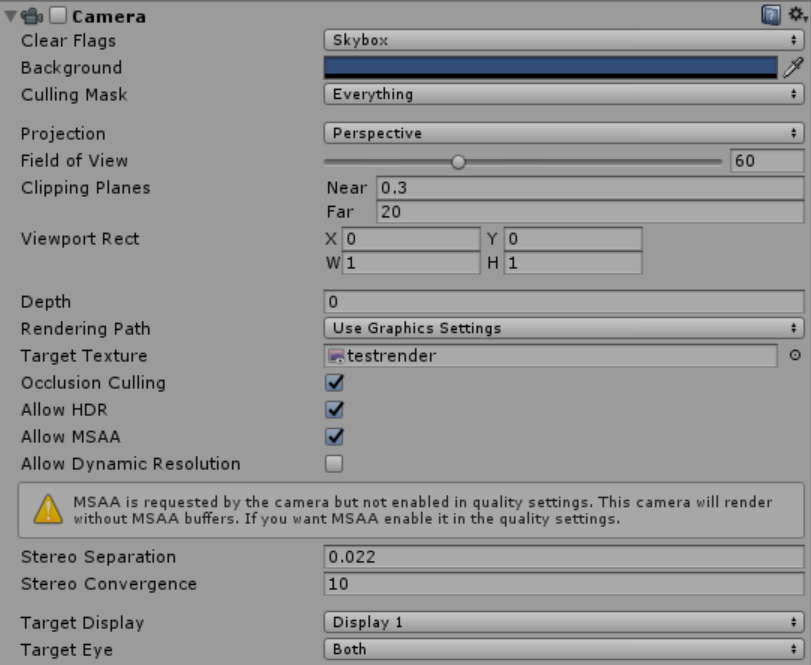

I'm rendering to a depth buffer using a standard camera and a render texture whose format is set to Depth only. I trigger the render by calling camera.Render() from a custom editor script when a button is clicked. The near and far clipping planes are set to values unrelated to the observed range (0.3 and 20, see below). I should also note that the shader used to evaluate the texture doesn't specify any offset.

The values read from the depth buffer (in another shader that samples the texture) are weird: objects near the far clipping plane show values of almost 0, and objects at the near clipping plane show values of 0.5.

When I compute the pixel depth manually (by constructing the camera's MVP matrix, multiplying it with the vertex and dividing by w in a pixel shader), I get the expected values instead (going from 1.0 to 0.0).
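To make the manual computation concrete, here is a minimal sketch of it outside Unity, in Python rather than shader code. It is not the actual shader: it assumes a reversed-Z projection with depth in [0, 1] (1.0 at the near plane, 0.0 at the far plane, matching the expected range above), takes the model and view matrices to be identity so the point is already in view space, and uses +z as the forward direction. The function names, the 60 degree field of view and the 16:9 aspect ratio are placeholders for illustration; only the 0.3 and 20 clipping planes come from the setup described here.

```python
import math

def reversed_z_projection(fov_y_deg, aspect, near, far):
    """Row-major 4x4 perspective matrix (assumed convention, not Unity's own):
    view-space z = near maps to depth 1.0, z = far maps to depth 0.0."""
    f = 1.0 / math.tan(math.radians(fov_y_deg) / 2.0)
    a = -near / (far - near)        # scale applied to z
    b = near * far / (far - near)   # constant term
    return [
        [f / aspect, 0.0, 0.0, 0.0],
        [0.0, f, 0.0, 0.0],
        [0.0, 0.0, a, b],
        [0.0, 0.0, 1.0, 0.0],       # clip.w = view-space z
    ]

def mat_vec(m, v):
    """Multiply a 4x4 row-major matrix with a 4-component column vector."""
    return [sum(m[r][c] * v[c] for c in range(4)) for r in range(4)]

def manual_depth(proj, view_pos):
    """Project a view-space point and perform the perspective divide on z."""
    clip = mat_vec(proj, [view_pos[0], view_pos[1], view_pos[2], 1.0])
    return clip[2] / clip[3]

NEAR, FAR = 0.3, 20.0  # clipping planes from the question
proj = reversed_z_projection(60.0, 16.0 / 9.0, NEAR, FAR)

for z in (NEAR, 1.0, 5.0, FAR):
    print(f"view z = {z:5.2f} -> depth = {manual_depth(proj, (0.0, 0.0, z)):.4f}")
```

Under these assumptions the depth is 1.0 at the near plane and 0.0 at the far plane, and it does not depend on the field of view or aspect ratio, so a uniform factor of 2 would have to come from somewhere other than those camera settings.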

The ratio seems to hold for any arbitrary value in the depth texture, too. For example, when I read a pixel with depth 0.25 from the depth texture, the corresponding manually computed depth is 0.5. For certain values the match is not exact (the factor is slightly off from 2.0); I assume that is just due to floating-point accuracy, though I could be wrong there.

Any ideas what could be causing this, and how I can deal with it? It seems I could just multiply the depth from the texture by 2.0, but that feels hacky and won't work on other machines if some underlying platform-specific code is the cause.

For completeness, screenshots showing camera and render texture settings:


Every time I find a question I'm interested in, it has no answer :(
