Speeding up reading from a RenderTexture

I'm trying to read from a render texture every frame. In my scene, I have a camera that's rendering to a RenderTexture. I need to determine the value of a pixel at a known location in that image.

I tried setting up some code to read a pixel out of the render texture into a 1x1 Texture2D, like so:

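// Destination texture on the CPU side: a single pixel.
Texture2D testTexture = new Texture2D(1, 1);
// Source region: the one pixel of interest in the active render target.
Rect rect = new Rect(Mathf.FloorToInt(pixel.x), Mathf.FloorToInt(pixel.y), 1, 1);
// Copies from the currently active render target into testTexture.
testTexture.ReadPixels(rect, 0, 0);
//testTexture.Apply();
Color32 pixelSample = testTexture.GetPixel(0, 0);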

This code, specifically ReadPixels(), is taking 3-5 ms each frame on the GPU. That's actually quite a big deal for us, and I'm trying to figure out why it's so large.

The code is copying from the GPU to RAM, so I get that there's going to be a delay, but this seems large. I'm wondering if I'm blocking execution of Unity's main thread or doing something else to gum up the works.

I'm calling this code from OnRenderImage - I've also tried Update() with no apparent change. Is there a specific point in the rendering I should be doing this, or perhaps a different way of going about it that's faster?

Is there any other way to speed up the read of a single pixel from a RenderTexture? FYI, this has to work in the web player, so I can't use texture pointers.

(P.S.: I don't believe it's possible to get information directly out of a shader, at least pre-DX11, but if I'm wrong that's another avenue I could try)

Have you used this function without problems before?

Try changing the resolution drastically lower, just to see if there is a change in performance.

If performance improves as the resolution gets smaller, it's quite likely that ReadPixels is pulling the entire screen from the GPU to the CPU and then filling the texture.

If that is the case, whether you read 1 or 100 pixels won't matter as much as the screen resolution, and your current method might be out of the question if you mean to preserve your resolution.

Thanks @jbarba,

No, I haven't done anything like this in a faster way before.

Changing the resolution of the render texture definitely has an effect on the GPU time. However, it appears to be non-linear: anything below 256x256 is around 1.5 ms, going to 1024x1024 raises it to 3-4 ms, and going to 2048x248 raises it to 10-12 ms.

One interesting thing is that in the Profiler, the time is all listed under RenderTexture.SetActive. So I was hoping that if I could do this somewhere in the pipeline where the RenderTexture is already active, it wouldn't be so disruptive.

Answer by Dave-Carlile · May 29, 2013 at 11:58 PM

When you render to the texture, then try to read it back immediately, you're causing a pipeline stall. The GPU has to finish rendering all commands it has been given up to the "read pixels" call, then send that data back to the CPU. Pipeline stalls kill GPU performance.

Shawn Hargreaves of XNA fame has a great explanation of what's going on.

One way to deal with this is to render to the texture, then wait a frame or two before you attempt to copy the data back to the CPU. This gives the pipeline a chance to complete all of the commands normally without having to stall to fulfill your getdata request, at the cost of your needing to use slightly old data.

Another way to handle this is to figure out a way using shaders so you don't ever have to pull the data out of GPU memory. Do you actually need to call GetPixels?

Thanks Dave!

Interesting idea - I could look into waiting a frame or two, and compare the GPU time. I'll definitely check out the blog post you linked. I'm wading neck deep into GPU stuff and I'm definitely a newbie in that arena, so I appreciate all the ideas you can give me :)

As for whether I have to pull it out of GPU memory, the answer is yes, and the why will require a bit more explanation:

I'm rendering the scene with a main camera and a second camera with shader replacement. The shader replacement renders everything in such a way that the output color of the object corresponds to an object ID. That way, I can tell what the user's mouse is currently hovering over. I potentially have 10s of thousands of 'objects' within a scene, for example a mesh containing a few thousand sprites, so trying to determine mouse hover with things like colliders and raytracing is just out of the question.

So yeah, I need to pull the value back to the CPU somehow so I can use it in code.
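For anyone following along, the ID-to-color mapping described above might look roughly like this. It is only a sketch in C++ (the language of the plugin code further down), not the asker's actual shader or script: the Rgba8 struct, the function names, and the choice of packing a 24-bit ID into RGB with ID 0 meaning "nothing under the cursor" are all assumptions made for illustration.

```cpp
#include <cstdint>

// Hypothetical 8-bit-per-channel pixel, standing in for the value read back
// from the ID render texture.
struct Rgba8 {
    std::uint8_t r, g, b, a;
};

// Pack a 24-bit object ID into the RGB channels; alpha is left fully opaque.
// ID 0 is reserved here for "no object" (an assumption of this sketch).
Rgba8 encodeObjectId(std::uint32_t id) {
    return Rgba8{
        static_cast<std::uint8_t>(id & 0xFF),
        static_cast<std::uint8_t>((id >> 8) & 0xFF),
        static_cast<std::uint8_t>((id >> 16) & 0xFF),
        255};
}

// Recover the object ID from a pixel produced by encodeObjectId.
std::uint32_t decodeObjectId(const Rgba8& px) {
    return static_cast<std::uint32_t>(px.r) |
           (static_cast<std::uint32_t>(px.g) << 8) |
           (static_cast<std::uint32_t>(px.b) << 16);
}
```

For the ID to survive the round trip, the replacement shader would have to write the color unmodified, so lighting, fog, anti-aliasing and any color-space conversion on that camera would presumably need to be off.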

If you like reading, here's the discussion that spawned this idea:

http://answers.unity3d.com/questions/448653/raytracing-through-an-octree.html

I've not done that exact thing, but I have had a situation (when using XNA) where I needed to pull data back from the GPU. A combination of waiting a couple of frames, and only rendering to the texture after I'd retrieved the previous data (i.e. only updating the render target while not already waiting for a previous render), took care of most performance issues for me.
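The bookkeeping for that approach (wait a few frames, and don't re-render the target while a readback is still pending) could be sketched as below. This is an illustrative C++ sketch only: the DelayedReadback class and its method names are hypothetical, and it models just the frame counting, not any actual GPU or engine call. Whether the delay really avoids a stall depends on how the engine implements the readback.

```cpp
#include <cstdint>

// Tracks a single in-flight readback. All names are hypothetical.
class DelayedReadback {
public:
    explicit DelayedReadback(std::uint64_t latencyFrames)
        : latencyFrames_(latencyFrames), pending_(false), renderedFrame_(0) {}

    // A new render may only be issued when nothing is waiting to be read.
    bool canIssueRender() const { return !pending_; }

    // Record that the render target was updated on the given frame.
    void markRendered(std::uint64_t frame) {
        pending_ = true;
        renderedFrame_ = frame;
    }

    // True once at least latencyFrames have passed since the render.
    bool readyToRead(std::uint64_t frame) const {
        return pending_ && (frame - renderedFrame_ >= latencyFrames_);
    }

    // Call after the data has been copied back to the CPU.
    void markRead() { pending_ = false; }

private:
    std::uint64_t latencyFrames_;
    bool pending_;
    std::uint64_t renderedFrame_;
};
```

Each frame, the caller would check readyToRead first, do the readback and call markRead if it returns true, and then render again only if canIssueRender allows it. The price is that the value read is latencyFrames old.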

In my tests it doesn't seem to make any difference how long I wait to read the RenderTexture. I have a disabled camera and the code below, and all that happens is I get a spike every couple of frames rather than consistently high rendering times. Do you remember exactly what it was you were doing in your earlier project that would be different?

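private IEnumerator renderScreen()
{
    while (true)
    {
        yield return new WaitForEndOfFrame();
        // Render the disabled camera manually into its target texture.
        camera.Render();
        // Wait two frames before reading back.
        yield return null;
        yield return null;
        // Make the render texture active so ReadPixels reads from it.
        RenderTexture prev = RenderTexture.active;
        RenderTexture.active = tex;
        output.ReadPixels(readFrom, 0, 0);
        RenderTexture.active = prev;
        yield return null;
    }
}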

(Notes: this is attached to the disabled camera's GameObject. tex is camera.targetTexture, readFrom is a Rect, and output is a Texture2D.)

Have you tried waiting more frames - just as an experiment? My render texture was 256x256 as well, so that makes a difference...

I did try adding more yields, and it only moved the spikes further apart - it didn't reduce them at all.

I've been playing with it further, trying to get the resultant texture displayed in the GUI, and I think I've come across a showstopper Unity bug:

Works:

Apply shader replacement to camera

Camera is on and renders every frame to render texture

Display render texture in GUI

From that, I correctly see the render texture with the shader replacement

Doesn't work:

Apply shader replacement to camera

Camera is off, and a coroutine does a camera.Render() every frame

Display render texture in GUI

From that, I only see some of the scene rendered with the replacement shader. My code is pretty simple:

private IEnumerator testing()
{
    while (true)
    {
        camera.Render();
        //camera.RenderWithShader(replacementShader, "RenderType");
        yield return null;
    }
}

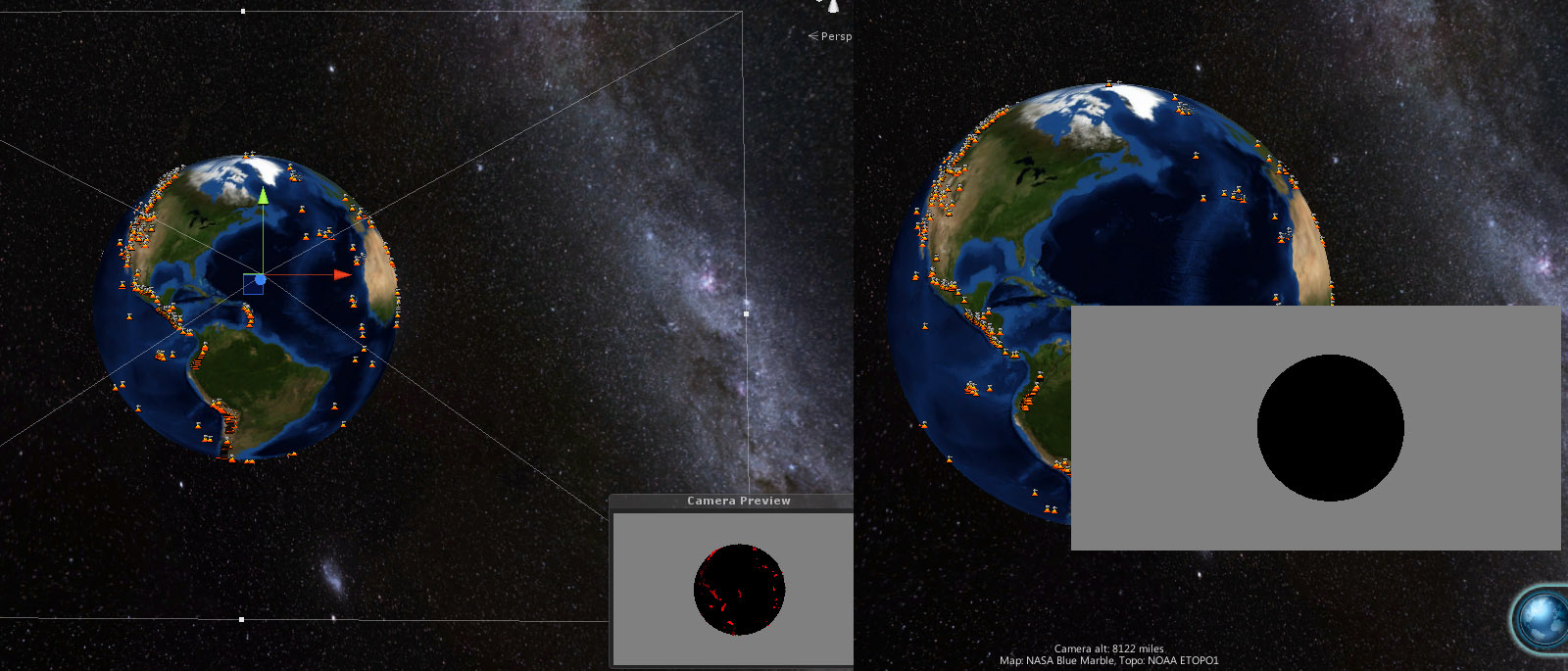

As you can see, I've tried using both Render() and RenderWithShader, and both show this abnormal behaviour. Below is a comparison between what I see in the scene view vs what I see displayed in the GUI. It's pretty obvious that what the camera is supposed to be seeing (camera preview) does not match what's in the render texture (right side).

If I can't get information out of the render texture when using replacement shaders without having to render every frame, then the suggestions you've given me probably aren't going to work. I could try enabling and disabling the camera component, but I'm not sure how many more hours I want to throw at this.

Answer by Kibsgaard · Feb 22, 2014 at 11:54 PM

EDIT 2: From Unity 5.2+, you can no longer call glReadPixels from the main thread and expect the correct RenderTexture/FrameBuffer to be active. You have to move the call to a Low-level native plugin and bind the framebuffer manually in the plugin before reading the pixels back. For more information on this, see the newest comments to this answer.

EDIT: (Only works for Unity 5.1 and older) All of the below is still correct, but an easier way to implement it is to just import the OpenGL library directly in C# (no need to build an extra C++ DLL).

[DllImport("opengl32")]

public static extern void glReadPixels (int x, int y, int width, int height, int format, int type, IntPtr buffer);

This might be a little late, but could be useful for someone else.

If the script is attached to the camera which renders to the render texture, you can put your ReadPixels() into

void OnPostRender () {}

Then the required RenderTexture is already active and you don't need to spend time changing it.

Because Unity probably wants a copy of all textures in CPU memory, ReadPixels can be very slow, especially when dealing with large textures. To get around this, the only solution I could find was to run Unity in OpenGL mode (add "-force-opengl" to the target path of Unity's shortcut) and then write a plugin (DLL) that calls the OpenGL function glReadPixels. That function can transfer directly from the currently active framebuffer (RenderTexture) to CPU memory. This reduced the time it takes to fetch a 1920x1080 RenderTexture into CPU memory from 54 ms to 12 ms.

The C/C++ DLL source (place glext.h in the source folder):

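// stdafx.h is assumed to include <windows.h>, which gl\GL.h requires.
#include "stdafx.h"
#include <gl\GL.h>
#include <gl\GLU.h>
#include <stdlib.h>
#include "glext.h"  // provides GL_BGRA and GL_UNSIGNED_INT_8_8_8_8_REV

#pragma comment(lib, "opengl32.lib")

// Reads a width x height block of pixels from the currently bound framebuffer
// into buffer (4 bytes per pixel). Returns 0 on success, -1 if a GL error was
// already pending before the read, and -2 if the read itself raised an error.
extern "C" __declspec(dllexport) int GetPixels(void* buffer, int x, int y, int width, int height) {
    if (glGetError())
        return -1;

    glReadPixels(x, y, width, height, GL_BGRA, GL_UNSIGNED_INT_8_8_8_8_REV, buffer);

    if (glGetError())
        return -2;

    return 0;
}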
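As a rough guide to what ends up in the buffer, here is a small sketch of the layout that GL_BGRA with GL_UNSIGNED_INT_8_8_8_8_REV produces. The Pixel struct and the helper names are hypothetical, and the sketch assumes tightly packed rows (default pixel-store settings). Each pixel is one 32-bit value with blue in the lowest byte and alpha in the highest, and glReadPixels returns rows starting from the bottom of the image.

```cpp
#include <cstddef>
#include <cstdint>

// Hypothetical unpacked pixel.
struct Pixel {
    std::uint8_t r, g, b, a;
};

// Bytes needed for a width x height readback at 4 bytes per pixel.
std::size_t readbackBufferSize(int width, int height) {
    return static_cast<std::size_t>(width) * static_cast<std::size_t>(height) * 4;
}

// Unpack one 32-bit value written with GL_BGRA + GL_UNSIGNED_INT_8_8_8_8_REV:
// bits 0-7 blue, 8-15 green, 16-23 red, 24-31 alpha.
Pixel unpackBgra8888Rev(std::uint32_t packed) {
    Pixel p;
    p.b = static_cast<std::uint8_t>(packed & 0xFF);
    p.g = static_cast<std::uint8_t>((packed >> 8) & 0xFF);
    p.r = static_cast<std::uint8_t>((packed >> 16) & 0xFF);
    p.a = static_cast<std::uint8_t>((packed >> 24) & 0xFF);
    return p;
}

// Index (in pixels) of column x, row yFromTop, where row 0 is the top of the
// image. The buffer itself is stored bottom row first, so the row is flipped.
std::size_t pixelIndexFromTop(int x, int yFromTop, int width, int height) {
    return static_cast<std::size_t>(height - 1 - yFromTop) *
               static_cast<std::size_t>(width) +
           static_cast<std::size_t>(x);
}
```

For a 1920x1080 readback, readbackBufferSize gives 8,294,400 bytes, so the buffer passed to GetPixels would need to be at least that large.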

Add the function to Unity (C#) like this (place the DLL in Assets\Plugins\):

[DllImport("NameOfYourDLL")]
private static extern int GetPixels(IntPtr buffer, int x, int y, int width, int height);

Then use it like any other function. The IntPtr buffer is a pointer to where you want to store your image. I get the pointer from another DLL - you could also get it like this:

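// pixelBuffer is a hypothetical byte[] of at least width * height * 4 bytes.
unsafe
{
    // fixed pins the array so the garbage collector cannot move it during the call.
    fixed (byte* ptr = pixelBuffer)
    {
        IntPtr buffer = (IntPtr)ptr;
        // x, y, width, height as in the GetPixels signature above.
        GetPixels(buffer, x, y, width, height);
    }
}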

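However, that requires you to allow unsafe code. Alternatively, you could probably get a pointer to a byte array without unsafe code, for example by pinning it with GCHandle.Alloc(array, GCHandleType.Pinned) and passing AddrOfPinnedObject(), or with a Marshal function.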

I am using this to fetch two full-HD renders each frame at ~24 fps and then keying those onto a 3D-SDI video stream using two Decklink hardware-keyers. The bottleneck is the fetching of data from the GPU as it stalls the rendering pipeline. If anyone finds a faster (real-time) solution for this, please let us know =)

This sounds like a really good solution. Did you stumble across this thread: http://forum.unity3d.com/threads/198568-Epic-Radial-Blur-Effect-for-Unity-Indie ? He created an image effect solution for Unity Indie. I think you could be of great help to him.

Cheers 0tacun

Does the call point of your GetPixels() method make a difference? I'm assuming that the glReadPixels() call needs to happen on the right thread in the right timing window, and that Unity has most of the control over this.

Also, have you tried this in builds for any other platforms? OS X? iOS? Android?

@slippdouglas: It makes a difference in that you don't have to tell the GPU to switch render target (RenderTexture.active = rt). I have not tried it on other platforms, but it should work (maybe the plugin has to be compiled as something other than a DLL on some platforms). For iOS take a look at this: http://stackoverflow.com/questions/9550297/faster-alternative-to-glreadpixels-in-iphone-opengl-es-2-0

Is there an existing implementation of this available? I'm new to glReadPixels and I'm trying to generate the 3d world position for each pixel

Answer by salvabervi · Oct 03, 2016 at 05:59 AM

@martijn-vegas hello, have u managed to solve the problem? I only get a picture entirely in black with your own code and I've checked and everything. I really need your help, Could u help me? Thanks in advance!
