
.wav or .mp3 for audioclips?

I know there are already some questions about this topic on this forum, but I can't find a good, complete answer. Basically, I have several sounds for my game, and I can get each of them in any format (WAV, MP3, ...). Which one should I use?

Answer by MakinStuffLookGood · Jan 05, 2015 at 09:35 PM

Typically, WAVs are used for small sound files (sound effects) and MP3s are used for longer, streamed files (background music).

The reasoning is that WAVs are stored uncompressed in memory, so there's little runtime overhead to decode them when you want to play them (e.g. a bullet SFX that plays hundreds of times throughout the game). An MP3 of a background song, on the other hand, could be 3 MB, and the uncompressed WAV of that same song could be more than 10 MB. That's not something you want sitting around in memory, so it's better to leave it compressed and stream it from disk. The decoding overhead is small when you're only doing this for one music track.
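To put rough numbers on that trade-off, here is a minimal sketch of the arithmetic for uncompressed PCM size. The `PcmSize` class and its `Bytes` method are made up for illustration (they are not a Unity API), and the 44.1 kHz, stereo, 16-bit settings are assumed CD-quality values, not something stated in the answer above.

```csharp
// Hypothetical helper (not a Unity API): estimates the size of raw PCM audio.
public static class PcmSize
{
    // bytes = sampleRate * channels * (bitsPerSample / 8) * seconds
    public static long Bytes(int sampleRate, int channels, int bitsPerSample, double seconds)
    {
        long bytesPerSecond = (long)sampleRate * channels * (bitsPerSample / 8);
        return (long)(bytesPerSecond * seconds);
    }
}
```

Under those assumptions, `PcmSize.Bytes(44100, 2, 16, 180)` gives 31,752,000 bytes for a three-minute song, which is roughly 30 MiB and comfortably above the ">10 MB" estimate.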

Perfect answer, thank you. I can't vote yet, but take my +1.

There's one small addition I would make to this answer.

If you're either reading or editing the audio clip's data in the game, it's highly advisable to use the WAV format and not the MP3 format.

For example, when making a game where the music affects parts of the world, you should use the WAV format instead of MP3, even for music. Otherwise, when using MP3, Unity will create an uncompressed copy of the music data in order to read the audio output from it. When using the WAV format, Unity doesn't create a copy of the data and instead uses the data of the file already stored in RAM. (This only happens when you try to read data out of the MP3 file.) A common, well-known example of this is a light show or enemies spawning based on the music's tones.
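As a rough illustration of the "light show driven by the music" case, here is a minimal sketch. Note that it reads the currently playing output via `AudioSource.GetOutputData` rather than reading the clip's file data directly, so it is a swapped-in technique and not necessarily what the comment above has in mind. The `MusicReactiveLight` class, its fields, and the tuning values are all hypothetical. If you read samples from the clip itself with `AudioClip.GetData` instead, my understanding is that a compressed clip needs its Load Type set to Decompress On Load in the import settings, so check the documentation for your Unity version.

```csharp
using UnityEngine;

// Hypothetical example script: drives a Light's intensity from the loudness
// of whatever the AudioSource on the same GameObject is currently playing.
[RequireComponent(typeof(AudioSource))]
public class MusicReactiveLight : MonoBehaviour
{
    public Light targetLight;          // assign in the Inspector
    public float intensityScale = 8f;  // arbitrary tuning value

    private AudioSource source;
    private readonly float[] samples = new float[256];

    void Awake()
    {
        source = GetComponent<AudioSource>();
    }

    void Update()
    {
        if (targetLight == null) return;

        // Copy the most recently played output samples of channel 0.
        source.GetOutputData(samples, 0);

        // Root-mean-square of the samples as a simple loudness estimate.
        float sumOfSquares = 0f;
        for (int i = 0; i < samples.Length; i++)
        {
            sumOfSquares += samples[i] * samples[i];
        }
        float rms = Mathf.Sqrt(sumOfSquares / samples.Length);

        targetLight.intensity = rms * intensityScale;
    }
}
```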

The same should be taken into consideration if you alter the music output in-game. For example, if you change the tone, style, or speed of the music in-game (like toning down the music in a horror game when danger is close), you should also use WAV instead of MP3 for the music. As soon as you manipulate the output of any bits in the MP3, Unity stores it as raw audio data, whereas with a WAV, Unity simply modifies the data of the WAV file already stored in RAM.
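For the horror-game example, one simple way to "tone down" the music is to adjust the `pitch` and `volume` properties of the `AudioSource` at runtime. This is only a sketch of that idea: the `DangerMusic` class, its fields, and the numeric ranges are hypothetical, and whether this counts as the kind of manipulation described above is my assumption.

```csharp
using UnityEngine;

// Hypothetical example script: slows and quiets the music as a threat approaches.
[RequireComponent(typeof(AudioSource))]
public class DangerMusic : MonoBehaviour
{
    public Transform player;          // assign in the Inspector
    public Transform threat;          // assign in the Inspector
    public float dangerRadius = 10f;  // arbitrary tuning value

    private AudioSource music;

    void Awake()
    {
        music = GetComponent<AudioSource>();
    }

    void Update()
    {
        if (player == null || threat == null) return;

        float distance = Vector3.Distance(player.position, threat.position);

        // 0 when the threat is on top of the player, 1 at or beyond dangerRadius.
        float safety = Mathf.Clamp01(distance / Mathf.Max(dangerRadius, 0.01f));

        // Lower pitch (slower playback) and lower volume as safety drops.
        music.pitch = Mathf.Lerp(0.7f, 1f, safety);
        music.volume = Mathf.Lerp(0.3f, 1f, safety);
    }
}
```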

Those are specific conditions, but I think they are important to mention nevertheless.

Answer by Kamuiyshirou · Jan 05, 2015 at 09:43 PM

1 - Any audio file imported into Unity is available from scripts as an Audio Clip instance, which is effectively just a container for the audio data. The clips must be used in conjunction with Audio Sources and an Audio Listener in order to actually generate sound. When you attach your clip to an object in the game, it adds an Audio Source component to the object, which has Volume, Pitch, and numerous other properties. While a Source is playing, an Audio Listener can “hear” all sources within range, and the combination of those sources gives the sound that will actually be heard through the speakers. There can be only one Audio Listener in your scene, and this is usually attached to the Main Camera.

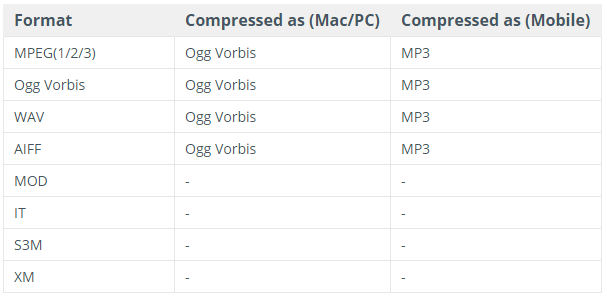

Supported Formats

Source: http://docs.unity3d.com/Manual/AudioFiles.html

2 - Create a sound controller:

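    using UnityEngine;

    // Identifiers for the sounds the game can play.
    public enum soundsGame {
        die,
        toque,
        menu,
        point,
        wing
    }

    // Attach to a GameObject that also has an AudioSource component.
    [RequireComponent(typeof(AudioSource))]
    public class SoundController : MonoBehaviour {

        // Assign these clips in the Inspector.
        public AudioClip soundDie;
        public AudioClip soundToque;
        public AudioClip soundMenu;
        public AudioClip soundPoint;
        public AudioClip soundWing;

        public static SoundController instance;

        // The built-in `audio` shortcut property was removed in Unity 5,
        // so the AudioSource is cached here instead.
        private AudioSource source;

        // Awake runs before any Start, so the instance is ready for early callers.
        void Awake () {
            instance = this;
            source = GetComponent<AudioSource>();
        }

        // Plays the clip that matches the requested sound.
        public static void PlaySound(soundsGame currentSound) {
            switch (currentSound) {
                case soundsGame.die:
                    instance.source.PlayOneShot(instance.soundDie);
                    break;
                case soundsGame.toque:
                    instance.source.PlayOneShot(instance.soundToque);
                    break;
                case soundsGame.menu:
                    instance.source.PlayOneShot(instance.soundMenu);
                    break;
                case soundsGame.point:
                    instance.source.PlayOneShot(instance.soundPoint);
                    break;
                case soundsGame.wing:
                    instance.source.PlayOneShot(instance.soundWing);
                    break;
            }
        }
    }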

3 - Create a GameObject (for example, a cube).

4 - Add an Audio Source component to it.

5 - Drag the SoundController script onto the GameObject.

6 - Drag each audio file (.mp3 or .wav) onto the matching field of the script in the Inspector (for example, Sound Toque).
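Once the steps above are done, any other script can trigger a sound through the static `PlaySound` method. A minimal sketch of such a caller follows. The `WingInput` class is hypothetical, and the use of `Input.GetKeyDown` assumes the project uses Unity's legacy Input Manager.

```csharp
using UnityEngine;

// Hypothetical caller: plays the "wing" sound whenever Space is pressed.
public class WingInput : MonoBehaviour
{
    void Update()
    {
        // Skip the call if no SoundController exists in the scene yet.
        if (Input.GetKeyDown(KeyCode.Space) && SoundController.instance != null)
        {
            SoundController.PlaySound(soundsGame.wing);
        }
    }
}
```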

That enum and switch case thing seems a bit ridiculous... you could just as easily use a Dictionary that uses the name of the clip as the key with the clip as the value. Then you wouldn't need an additional variable, enum declaration, and switch case for every new sound you add.
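A rough sketch of what that Dictionary-based version could look like is below. The `SoundLibrary` class and its members are hypothetical names, and keying on each clip's `name` is just one possible choice.

```csharp
using System.Collections.Generic;
using UnityEngine;

// Hypothetical alternative to the enum/switch controller:
// clips are looked up by their asset name.
[RequireComponent(typeof(AudioSource))]
public class SoundLibrary : MonoBehaviour
{
    public AudioClip[] clips; // assign in the Inspector

    private readonly Dictionary<string, AudioClip> clipsByName =
        new Dictionary<string, AudioClip>();
    private AudioSource source;

    void Awake()
    {
        source = GetComponent<AudioSource>();
        if (clips == null) return;

        foreach (AudioClip clip in clips)
        {
            if (clip != null) clipsByName[clip.name] = clip;
        }
    }

    // Plays the clip with the given name, or logs a warning if it is unknown.
    public void Play(string clipName)
    {
        AudioClip clip;
        if (clipsByName.TryGetValue(clipName, out clip))
        {
            source.PlayOneShot(clip);
        }
        else
        {
            Debug.LogWarning("No audio clip named " + clipName);
        }
    }
}
```

Adding a new sound then only means dropping another clip into the `clips` array. The trade-off is that a misspelled name fails at runtime instead of at compile time, as it would with the enum.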

True, but dictionaries have a lot of overhead. Obviously, for a real project, you would not use a switch statement, but in most cases, a simple List<(Key,V)> (<=64 items) or SortedList (<=1024 items) are a better choice. This is less relevant if you don't care about memory consumption or garbage. Caveat: SortedList is much worse than List for lookups and insertion primarily due to poor cache coherency and difficult to predict branching.
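For reference, the "simple list of key/value pairs" idea can be sketched as a flat list with a linear scan. The `SmallMap` class below is a hypothetical illustration in plain C#, not a standard collection, and whether it actually beats a Dictionary at a given size is something to measure in your own project.

```csharp
using System.Collections.Generic;

// Hypothetical small lookup table backed by a flat list and a linear scan.
public class SmallMap<TValue>
{
    private readonly List<KeyValuePair<string, TValue>> entries =
        new List<KeyValuePair<string, TValue>>();

    // Adds the key, or overwrites the value if the key already exists.
    public void Set(string key, TValue value)
    {
        for (int i = 0; i < entries.Count; i++)
        {
            if (entries[i].Key == key)
            {
                entries[i] = new KeyValuePair<string, TValue>(key, value);
                return;
            }
        }
        entries.Add(new KeyValuePair<string, TValue>(key, value));
    }

    // Returns true and the stored value if the key is present.
    public bool TryGet(string key, out TValue value)
    {
        for (int i = 0; i < entries.Count; i++)
        {
            if (entries[i].Key == key)
            {
                value = entries[i].Value;
                return true;
            }
        }
        value = default(TValue);
        return false;
    }
}
```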

Thank you for the answer. I've read it and learned from it, although it was a bit odd as an answer, since it seems to be answering a different question. Still, I learned something.
