
Multiple touch inputs operating at the same time on different parts of the screen

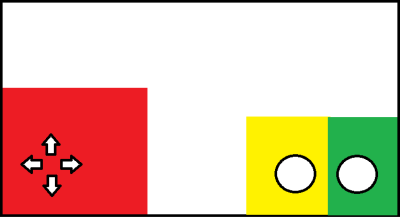

In the figure shown above, I have the layout of touch controls that I want to use for my game. I am wondering how to code it so that these colored areas are isolated from one another, as opposed to the screen taking generic touch inputs. How would I code for these sections independently, and as a group? For example, if I want to use both the yellow and green buttons, how would I detect that I'm hitting both areas at the same time, or that I hit them separately within a certain amount of time? The red section would be used primarily for 3D movement along the x and z axes. I plan to use the accelerometer to rotate around the y-axis as well as to look up in the air and down to the floor.

I am hoping for either basic code, or to be pointed in the right direction where these issues have been discussed because I have not found appropriate knowledge on these issues.

Thank you for your help on this issue.

Could you not use box colliders on each section and raycast against them, like this? You would still need to work out which of the 3 hit coordinates is which and how to make it work, but I made something similar that works the same way.

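    bool greenButtonHit = false;
    RaycastHit vHit; // filled in by Physics.Raycast when the ray hits a collider

    // touchPosition: your touch coordinates here (for example, Input.GetTouch(i).position)
    Ray vRay = Camera.main.ScreenPointToRay (touchPosition);

    if (Physics.Raycast (vRay, out vHit, 200)) {
        if (vHit.collider.name == "GreenBox") {
            greenButtonHit = true;
        }
    }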

@theUndeadEmo what you have here makes a lot of sense. Please understand that I am an amateur and do this as a hobby, so please excuse the next two questions because they are really bad.

1) Aren't box colliders and raycasts aspects of the in-game coding? I am talking about the user's ability to touch the screen and get different responses such as jumping, blocking, and punching.

2) What does the "200" refer to in the line: if (Physics.Raycast (vRay, out vHit, 200)) {

Sorry, are you using OnGUI by any chance? (void OnGUI() { /* ... */ })

My solution was for NGUI. Having used it for so long, I took it to be the normal UI type everyone uses :(

If your UI happens to be in 3D world space, then my solution would work (ish); you still need to figure out which touches go where.

The 200 in if (Physics.Raycast...) is how far the ray is cast into the game world. Depending on how close your UI is to the camera, you might want to reduce or increase that number.

@theUndeadEmo Actually, I had been testing the game on my computer this entire time, so I hadn't even begun testing on my phone yet. OnGUI definitely seems to be the way to go, but I was wondering what the equivalent would be in JavaScript, as I understand it much better than C#. Also, could you explain how the code references certain parts of the screen on phones and tablets, so that I could divide the screen space for touches versus drags...

Thank you.

OnGUI is handy, but not recommended for mobile devices because it's somewhat heavy (OnGUI is called multiple times during a single frame). The old GUITexture is better for such devices (Unity's Joystick script uses GUITextures).

Answer by aldonaletto · Oct 02, 2013 at 12:44 AM

This can be done in multi-touch devices (which I suppose means 100% of the touch devices produced nowadays). A simple solution is to use GUITextures for each button, and use HitTest to check whether a touch occurred in the button area - for instance:

    // drag the button textures to these fields:
    public var guiUp: GUITexture;
    public var guiDown: GUITexture;
    public var guiLeft: GUITexture;
    public var guiRight: GUITexture;

    // button states:
    var buttonUp: boolean;
    var buttonDown: boolean;
    var buttonLeft: boolean;
    var buttonRight: boolean;

    function ReadButtons(){ // check buttons
        buttonUp = false;
        buttonDown = false;
        buttonLeft = false;
        buttonRight = false;
        var count: int = Input.touchCount;
        for (var i=0; i<count; i++){ // verify all touches
            var touch: Touch = Input.GetTouch(i);
            // if touch inside some button GUI, set the corresponding value
            if (guiUp.HitTest(touch.position)) buttonUp = true;
            if (guiDown.HitTest(touch.position)) buttonDown = true;
            if (guiLeft.HitTest(touch.position)) buttonLeft = true;
            if (guiRight.HitTest(touch.position)) buttonRight = true;
        }
    }

    function Update(){
        ReadButtons();
        if (buttonUp){
            // button up is pressed
        }
        if (buttonLeft){
            // button left is pressed
        }
        ...
    }
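The original question also asked about pressing two buttons together, or one shortly after the other. Once each button has a per-frame boolean like the ones above, that reduces to boolean logic plus a timestamp per button. Here is a minimal sketch in plain JavaScript (not Unity API, so it can run anywhere). The names `makeComboDetector` and `windowSeconds` are made up for illustration, and `now` is assumed to be a time in seconds that you pass in each frame (in Unity that could be `Time.time`).

```javascript
// Plain JavaScript sketch, not Unity API. All names here are hypothetical.
// Tracks when each of two buttons was last down and reports two kinds of combo.
function makeComboDetector(windowSeconds) {
  let lastA = -Infinity; // last time button A was seen down
  let lastB = -Infinity; // last time button B was seen down

  // Call once per frame with the current button states and the current time.
  return function update(aDown, bDown, now) {
    if (aDown) lastA = now;
    if (bDown) lastB = now;
    return {
      // both buttons are held in this very frame
      together: aDown && bDown,
      // at least one button is down now, and the other was down
      // no more than windowSeconds ago
      withinWindow: (aDown || bDown) &&
                    Math.abs(lastA - lastB) <= windowSeconds
    };
  };
}
```

With a window of 0.5 seconds, pressing A at t = 1.0 and B at t = 1.25 reports `withinWindow` as true, while pressing B only at t = 2.0 does not. Holding both at once sets `together`.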

EDITED: You may also use a GUITexture for each area and define Rects in screen coordinates that specify the space occupied by each button:

    // drag the GUITextures for each area here:
    public var area1: GUITexture;
    public var area2: GUITexture;

    // define the Rect occupied by each button here (screen coordinates):
    public var rButUp: Rect;
    public var rButDown: Rect;
    public var rButLeft: Rect;
    public var rButRight: Rect;
    public var rButA: Rect;
    public var rButB: Rect;

    // button states:
    var buttonUp: boolean;
    var buttonDown: boolean;
    var buttonLeft: boolean;
    var buttonRight: boolean;
    var buttonA: boolean;
    var buttonB: boolean;

    function ReadButtons(){ // check buttons
        buttonUp = false;
        buttonDown = false;
        buttonLeft = false;
        buttonRight = false;
        buttonA = false;
        buttonB = false;
        var count: int = Input.touchCount;
        for (var i=0; i<count; i++){ // verify all touches
            var touch: Touch = Input.GetTouch(i);
            // if touch inside some GUI, check the button rects:
            if (area1.HitTest(touch.position)){
                if (rButUp.Contains(touch.position)) buttonUp = true;
                if (rButDown.Contains(touch.position)) buttonDown = true;
                if (rButLeft.Contains(touch.position)) buttonLeft = true;
                if (rButRight.Contains(touch.position)) buttonRight = true;
            }
            if (area2.HitTest(touch.position)){
                if (rButA.Contains(touch.position)) buttonA = true;
                if (rButB.Contains(touch.position)) buttonB = true;
            }
        }
    } // end of ReadButtons

    ...

@aldonaletto That all looks perfectly logical to me. My only question is how do I separate the areas? Let's say I used the exact layout above, which, admittedly, is a bit poorly constructed. How would I describe the bounds of each area on the screen?

Thank you for showing up. I always appreciate your input.

You may use separate GUITextures for each button with the script above. If you want to use a single GUITexture for several buttons, define Rects and use Rect.Contains to know which part of the GUITexture was hit - I'll edit my answer to show how to do it.
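To make the "define Rects" idea concrete, here is a rough sketch of describing each area's bounds as a fraction of the screen size, then checking every touch against every area. It is plain JavaScript rather than Unity API. The helper names (`makeRect`, `contains`, `buildLayout`, `readAreas`) are invented for this example, and the placement of the `move`, `yellow`, and `green` areas is only a guess, so adjust the fractions to match your own layout. Coordinates are assumed to be in pixels with the origin at the bottom-left, which is how Unity reports `touch.position`.

```javascript
// Plain JavaScript sketch, not Unity API. All names and the layout are hypothetical.
function makeRect(x, y, width, height) {
  return { x: x, y: y, width: width, height: height };
}

// True when the point lies inside the rectangle (min edge inclusive, max edge exclusive).
function contains(rect, point) {
  return point.x >= rect.x && point.x < rect.x + rect.width &&
         point.y >= rect.y && point.y < rect.y + rect.height;
}

// Describe each area as a fraction of the screen, so it scales across devices.
function buildLayout(screenWidth, screenHeight) {
  return {
    move:   makeRect(0,                  0, screenWidth * 0.5,  screenHeight * 0.5),
    yellow: makeRect(screenWidth * 0.5,  0, screenWidth * 0.25, screenHeight * 0.5),
    green:  makeRect(screenWidth * 0.75, 0, screenWidth * 0.25, screenHeight * 0.5)
  };
}

// Returns an object such as { move: true, yellow: false, green: true }
// telling which areas have at least one touch inside them.
function readAreas(layout, touches) {
  const pressed = {};
  for (const name of Object.keys(layout)) {
    pressed[name] = false;
  }
  for (const touch of touches) {
    for (const name of Object.keys(layout)) {
      if (contains(layout[name], touch)) pressed[name] = true;
    }
  }
  return pressed;
}
```

On an 800 x 480 screen, touches at (100, 100) and (700, 50) would mark `move` and `green` as pressed and leave `yellow` unpressed. In Unity you would build the same rectangles from `Screen.width` and `Screen.height` and assign them to the Rect fields used with `Rect.Contains`.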

@aldonaletto Is there any way to make it so that every time you click the button, the action happens only once per click instead of continuously?

So the scripts worked great, the first one at least. How can I make it so that when I tap the jump button, it jumps only once rather than continuously moving up?
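One common way to get a single action per tap is to react only on the frame where the button goes from "not pressed" to "pressed", instead of on every frame where it is held. Below is a small sketch of that idea in plain JavaScript (not Unity API). `makeTapDetector` is a made-up helper, and `isDown` is assumed to be a per-frame state such as `buttonUp` from the script above. Inside Unity, another option is to only count touches whose `touch.phase` equals `TouchPhase.Began`.

```javascript
// Plain JavaScript sketch, not Unity API. makeTapDetector is a hypothetical helper.
// Returns a function that reports true only on the frame a button becomes pressed.
function makeTapDetector() {
  let wasDown = false; // button state seen on the previous frame

  // Call once per frame with the current held/not-held state.
  return function justPressed(isDown) {
    const fired = isDown && !wasDown; // rising edge: up last frame, down now
    wasDown = isDown;
    return fired;
  };
}
```

You would create one detector per button and call it once per frame; the jump would then be triggered only when it returns true, so holding the button does not keep re-triggering it.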

