
What makes Camera.ScreenToWorldPoint return different results with the same input?

Here is the problem: the code below, which calls camera.ScreenToWorldPoint(), returns different results for the same input. I have an empty scene with one game object that has two scripts attached to it, the default camera script and this TestInput class:

    public class TestInput : MonoBehaviour {

        public new Camera camera;

        private void Update () {
            var testPosition = new Vector3( 90, 300, 0 );
            var worldPosition = camera.ScreenToWorldPoint ( testPosition );

            Debug.Log( Time.time +
                " screen: " + testPosition +
                " world: " + worldPosition +
                " camera.position: " + camera.transform.localPosition +
                "" );
        }
    }

Results:

// 2.67702 screen: (90.0, 300.0, 0.0) world: (-5.3, 2.4, -10.0) camera.position: (0.0, 0.0, -10.0)

// 2.71742 screen: (90.0, 300.0, 0.0) world: (-6.4, -1.3, -10.0) camera.position: (0.0, 0.0, -10.0)

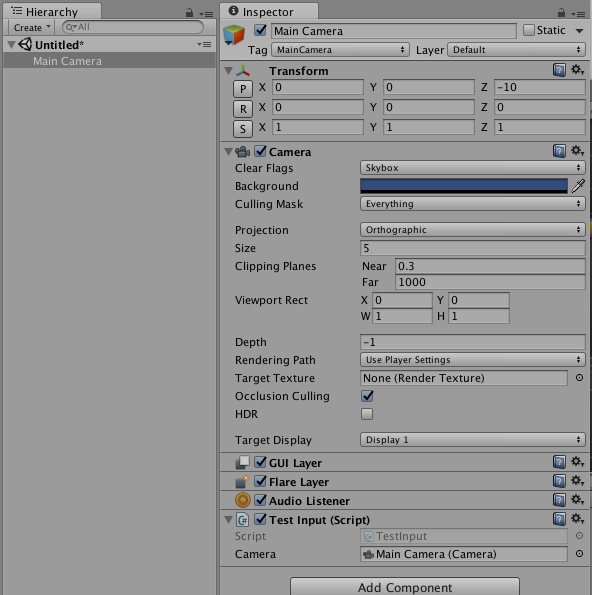

The first result is the correct value, the second one is appearing every 4-12 updates. Here is the scene:

What do you think could cause that problem?

Update: Here is how to reproduce this issue in a new project:

Create a new project;

Switch target to iOS;

Create new scene and add the script above;

In Game view, select iPhone Tall (2:3) or anything else without explicitly set dimensions (not iPhone Tall 320:480);

Observe the defect.

I recreated the test setup on my PC but did not observe the issue: the result stayed constant over thousands of iterations.

screen: (90.0, 300.0, 0.0) world: (-2.2, -0.1, -10.0) camera.position: (0.0, 1.0, -10.0)

UnityEngine.Debug:Log(Object)

TestInput:Update() (at Assets/TestInput.cs:14)

However, I did NOT see "iPhone Tall (2:3)" as an aspect ratio, so I created a new one, 2x3, using "aspect ratio" and not "fixed resolution".

edit: I notice that if I CHANGE the aspect ratio WHILE playing, I get a single result/frame with a different value. That is the closest I can get to replicating the issue.

edit: Ah, I hadn't clicked "change platform". Now I see the iPhone Tall (2:3) option, but I still get constant results. Hmm, I can also see the issue if I resize the Unity editor window itself (which leaves room for OS-specific interference as a possible culprit...).
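Since changing the aspect ratio or resizing the window reproduces a different value, one way to check whether the same thing is happening in the original case is to log the viewport size next to the converted point. This is only a diagnostic sketch, not a fix: the class name `ViewportChangeLogger` and the field `targetCamera` are made up for illustration, and it assumes the camera is assigned in the Inspector.

```csharp
using UnityEngine;

// Diagnostic sketch (hypothetical class name): logs whenever the camera's
// pixel dimensions change, together with the converted test point, so that
// frames with a different world result can be matched against size changes.
public class ViewportChangeLogger : MonoBehaviour
{
    // Hypothetical field; assign the camera in the Inspector.
    public Camera targetCamera;

    private int lastWidth;
    private int lastHeight;

    private void Update()
    {
        // Same fixed screen position as in the question.
        var testPosition = new Vector3(90, 300, 0);
        var worldPosition = targetCamera.ScreenToWorldPoint(testPosition);

        int width = targetCamera.pixelWidth;
        int height = targetCamera.pixelHeight;

        // Only log on frames where the viewport size differs from the last one seen.
        if (width != lastWidth || height != lastHeight)
        {
            Debug.Log(Time.time +
                " viewport changed: " + lastWidth + "x" + lastHeight +
                " to " + width + "x" + height +
                " Screen: " + Screen.width + "x" + Screen.height +
                " aspect: " + targetCamera.aspect +
                " world: " + worldPosition);

            lastWidth = width;
            lastHeight = height;
        }
    }
}
```

If the odd world values line up with the logged size changes, the input in pixels is the same but the viewport it is measured against is not, which would explain the different output.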

Right, when I switch the target to PC/Mac/Standalone it does not happen, but when target is iOS, it does occur.

I think I just hit this problem in 2018.3.11. I'm trying to do a simple drag and drop operation. It works perfectly in editor, but when I deploy to a device (in my case Android, Samsung Galaxy S8) the behavior of the drag is as if the values are doubled or tripled, making the dragged object go much further than the finger movement on the screen.

I've been looking for possible causes for a few days, and the only thing I can think is it has something to do with the ppi (dpi) of the device (500 something) vs. the dev computer (72)... but I've used this method in other games without this issue, so I'm pretty stumped.
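For the drag case, one thing that might help narrow it down is to avoid scaling pixel deltas at all and instead convert the pointer position to world space every frame, keeping the object's original depth. This is a rough sketch under assumptions, not a confirmed fix for the Galaxy S8 behavior: `WorldSpaceDrag` and `targetCamera` are made-up names, it uses the legacy Input Manager (where a touch is reported as mouse button 0 by default), and it starts dragging on any press without hit testing.

```csharp
using UnityEngine;

// Sketch (hypothetical class name): drags the object this script is attached
// to by converting the pointer position to world space each frame, instead
// of multiplying a pixel delta by some scale factor.
public class WorldSpaceDrag : MonoBehaviour
{
    // Hypothetical field; assign the camera in the Inspector.
    public Camera targetCamera;

    private bool dragging;
    private float depth;         // distance from the camera, in world units
    private Vector3 grabOffset;  // object position minus pointer position at grab time

    private void Update()
    {
        if (Input.GetMouseButtonDown(0))
        {
            // Remember how far in front of the camera the object is, so the
            // conversion below lands on the same plane as the object.
            depth = targetCamera.WorldToScreenPoint(transform.position).z;
            grabOffset = transform.position - PointerWorld();
            dragging = true;
        }
        else if (Input.GetMouseButtonUp(0))
        {
            dragging = false;
        }

        if (dragging)
        {
            transform.position = PointerWorld() + grabOffset;
        }
    }

    // Converts the current pointer position to a world point at the stored depth.
    private Vector3 PointerWorld()
    {
        Vector3 screen = Input.mousePosition;
        screen.z = depth;
        return targetCamera.ScreenToWorldPoint(screen);
    }
}
```

If the object still overshoots the finger with this approach, the screen-to-world conversion itself is probably off on the device; logging `Screen.width`, `Screen.height` and `Screen.dpi` there and comparing them with the editor values would be a reasonable next step.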
