
How to merge Camera outputs/render-to-texture

Hi there,

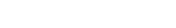

I'm creating an in-game omnidirectional camera with a 270° field of view. Since I presume Unity doesn't support this by default, I created 27 cameras attached to each other, each with a FOV of 10°. Combined, they form a 3/4 cylinder with a total FOV of 270°, as shown in the screenshot. (Don't mind the single rectangular camera in front; that one is for testing only.)
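The arithmetic of that fan is simple: 270° / 27 cameras = 10° per camera. Below is a small sketch (plain Python, not Unity code) of one way to compute the yaw of each camera so the slices sit edge to edge. The function name `camera_yaws` is hypothetical, and it assumes yaw 0 means "straight ahead" with each camera aimed at the centre of its own slice.

```python
def camera_yaws(num_cameras=27, total_deg=270.0):
    """Yaw angle in degrees (0 = straight ahead) for each camera in a fan
    that covers total_deg horizontally with equal slices.
    Hypothetical helper for illustration only."""
    slice_deg = total_deg / num_cameras  # 270 / 27 = 10 degrees per camera
    start = -total_deg / 2.0             # left edge of the whole fan
    # Aim each camera at the centre of its own slice.
    return [start + (i + 0.5) * slice_deg for i in range(num_cameras)]


yaws = camera_yaws()
print(yaws[0], yaws[-1])  # -130.0 130.0
```

Neighbouring cameras end up exactly one slice (10°) apart, so the outer edges of the first and last camera land at -135° and +135°.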

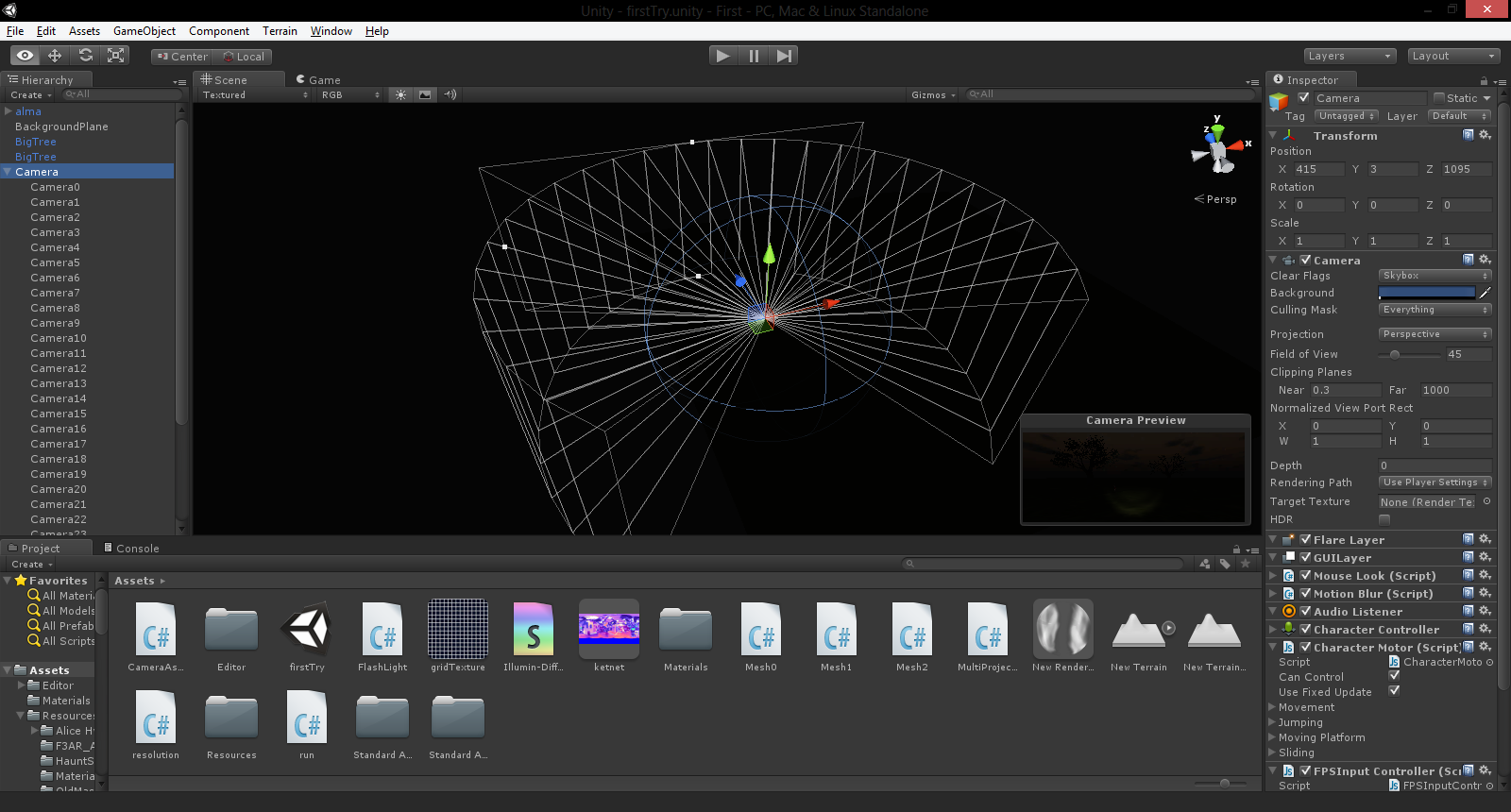

So now I need to render the whole thing to a texture, combining the outputs of all the small cameras into a single render-to-texture. How can I do that? Eventually I have to put the result on these meshes:

Any help?

Sorry for raising this post from the grave.

I'm interested in knowing how you solved this in the end. For a current project, I need to combine the output of two cameras, either by creating a texture twice as wide as a single camera's output and placing the two outputs side by side (preferred), or by blending the two outputs with an alpha slider.

Any insights? Thanks!

Answer by ikriz · Aug 22, 2013 at 09:48 AM

You probably need to do something with the cameras' matrices.

Thanks for your reply, but my bachelor thesis is over. I managed to finish this project and it works well.

I managed it by playing with the viewports, giving each camera a specific area of the render texture.

Yes, of course. I also made a test game to play on it. Here is a test video I recorded in the CAVE area, running in real time, using a Razer Hydra as the controller with its motion sensor calibrated as a flashlight.

Here is the video: http://www.youtube.com/watch?v=xHdm1Z$$anonymous$$$$anonymous$$9lw

Would you mind sharing how you were able to combine the cameras into one render-to-texture?

Well, for every camera you set the same render texture as its target texture. I can't remember whether I did it through code or through the Inspector, but certainly one of the two.

Aside from that, you have to change the viewport (I think that was it; it's a setting visible in the Inspector for every camera) so that each camera's width is only 1 / (number of cameras on the same render texture), with each camera starting at an offset equal to the end position of the previous one.
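To make the viewport layout concrete, here is a minimal sketch (plain Python, not Unity code) of the numbers you would type into each camera's viewport settings. It assumes the cameras are laid out left to right, use the full height of the texture, and that the viewport is expressed in normalized 0..1 coordinates as (x, y, width, height). `viewport_rects` is a hypothetical helper name.

```python
def viewport_rects(num_cameras):
    """Return (x, y, width, height) in normalized viewport coordinates
    for cameras placed side by side on one shared render texture.
    Hypothetical helper for illustration only."""
    width = 1.0 / num_cameras
    # Camera i starts where camera i - 1 ends; every camera spans the full height.
    return [(i * width, 0.0, width, 1.0) for i in range(num_cameras)]


for rect in viewport_rects(2):
    print(rect)
# (0.0, 0.0, 0.5, 1.0)
# (0.5, 0.0, 0.5, 1.0)
```

With two cameras this gives the "twice as wide, side by side" layout asked about earlier in the thread; with 27 cameras each one gets a 1/27-wide strip.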

Once all the cameras are rendering to the render texture, though, the difficult part was calculating the correct field of view for each camera so that it lines up exactly with the one beside it, which depends on the number of cameras used.
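One way to get that field of view is sketched below (plain Python, not Unity code). It assumes Unity's camera field of view is the vertical angle, that each camera's aspect ratio equals the pixel aspect of its slice of the render texture, and that the horizontal angle per camera is the total angle divided by the number of cameras. Under a standard pinhole model, tan(h/2) = aspect · tan(v/2), so the vertical angle follows from the desired horizontal one. `vertical_fov` is a hypothetical helper name.

```python
import math


def vertical_fov(total_horizontal_deg, num_cameras, texture_width, texture_height):
    """Vertical FOV (degrees) each camera needs so that together the cameras
    span total_horizontal_deg across a texture_width x texture_height texture.
    Hypothetical helper for illustration only."""
    horizontal_each = math.radians(total_horizontal_deg / num_cameras)
    # Pixel aspect ratio of one camera's slice of the render texture.
    aspect = (texture_width / num_cameras) / texture_height
    # Pinhole relation: tan(h / 2) = aspect * tan(v / 2), solved for v.
    return math.degrees(2.0 * math.atan(math.tan(horizontal_each / 2.0) / aspect))


# 27 cameras covering 270 degrees on an (assumed) 2700 x 300 render texture.
print(vertical_fov(270, 27, 2700, 300))
```

This is also why the answer depends on the number of cameras: changing the count changes both the horizontal angle per camera and the aspect ratio of each slice.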

Hope this helps.

Wow, the answer is so simple! Viewports! I was messing with a lot of code with no success...
