
How can you detect judder in VR?

I've been working in OpenVR (the Steam API), and every once in a while I get some judder. Is there a way to detect exactly which frames juddered, or when judder is about to occur? I'd like to minimize it, and I can change my application's processing dynamically based on how much judder might occur (making things run faster to prevent it), but I need some way of "profiling" the judder.

I'm in Unity, but I'm doing all my processing on the graphics card (using a Compute Shader), so the profiler says the framerate is very fast, yet sometimes judder occurs. I thought maybe taking the maximum frame rate over, say, 5000 frames might work, but sometimes judder occurs when the maximum hasn't changed. So is there a way I can actually measure it?

Answer by Dani Phye · Jun 09, 2016 at 02:51 AM

It turned out that Unity 5.4 has VRStats.gpuTimeLastFrame, which was exactly what I was looking for.

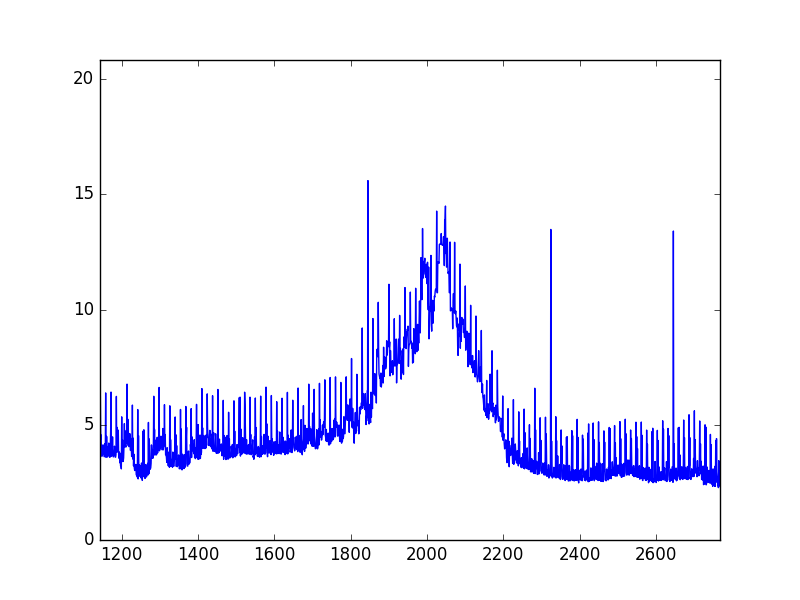

Edit: For those that are interested, it turns out that that "detecting spikes" method isn't actually relevant for detecting judder. Here is a plot to see why:

Where the x axis is frames, and the right axis is the ms value returned by VRStats.gpuTimeLastFrame. To generate this plot, I found somewhere that I had a very high amount of judder, and somewhere where I had no judder, and moved from the non-juddery region to the juddery region and back.

As you can see, the GPU times vary a ton from frame to frame, which means that measuring spikes will give you very little data about judder. Instead, judder occurs when the framerate is simply lower. While judder feels like "spikes" of lagging movement, it's actually present consistently whenever the framerate is lower, even if your head is still; you only notice it when your head is moving.

Thus, the best way to measure judder is simply to look at the overall level of this GPU time (an fps of sorts) rather than at individual spikes. If it is above about 6 ms (this is a threshold you can play with), you will most likely experience judder.
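For anyone who wants to try this, here is a minimal sketch of the idea in Python, just to show the logic. It assumes you feed it one GPU time per frame (in Unity that would be the VRStats.gpuTimeLastFrame value). The class name `JudderMonitor`, the 100-frame window, and the use of a plain rolling mean are my own assumptions for illustration; only the roughly 6 ms threshold comes from the answer above.

```python
from collections import deque


class JudderMonitor:
    """Rolling mean of per-frame GPU times, compared against a threshold.

    Hypothetical helper: the window size and the rolling mean are
    illustrative assumptions, not something stated in the answer.
    """

    def __init__(self, threshold_ms=6.0, window=100):
        self.threshold_ms = threshold_ms
        # deque with maxlen drops the oldest sample automatically
        self.samples = deque(maxlen=window)

    def add(self, gpu_ms):
        """Record the GPU time (in ms) of the frame that just finished."""
        self.samples.append(gpu_ms)

    def mean_ms(self):
        """Average GPU time over the current window (0.0 if empty)."""
        if not self.samples:
            return 0.0
        return sum(self.samples) / len(self.samples)

    def likely_judder(self):
        """True when the sustained GPU time is above the threshold."""
        return self.mean_ms() > self.threshold_ms


monitor = JudderMonitor(threshold_ms=6.0, window=4)
for gpu_ms in (4.0, 4.0, 4.0, 10.0):  # one spike, low sustained load
    monitor.add(gpu_ms)
spike_only = monitor.likely_judder()

for gpu_ms in (9.0, 9.0, 9.0, 9.0):  # sustained high load
    monitor.add(gpu_ms)
sustained = monitor.likely_judder()
```

Averaging over a window means a single slow frame does not trip the check, while a sustained rise in GPU time does, which matches the observation that judder tracks the overall framerate rather than spikes.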

Answer by FortisVenaliter · Jun 08, 2016 at 09:00 PM

You mean jitter? Never heard it called judder before.

Your basic premise is correct, but 5000 frames at 60fps is nearly an hour and a half, so that might be too long. Any bumps would be averaged out. I would use 60 or 120 frames for averaging.

"Any bumps would be averaged out"

Actually I was just taking the maximum of all of those, not the average. But yes sorry it was a poor example, I was typically using about 100 frames. And okay, not sure where I heard that, but jitter probably makes more sense.

I found that what I needed to do was set VSync to Every Second V Blank and then my CPU wasn't getting ahead of the GPU anymore, and the performance of the GPU (which is what VR cares about) was reflected in the frame rate.

What I ended up doing was taking the maximum over 100 frames as my current "frame rate" to adjust for, then resetting that value to 1.5 times the average of those frames, and then taking the max of that and the next 100 frames. This ensures that between one block of 100 frames and the next you don't happen to run into a frame with a very small fps.
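One possible reading of that scheme, sketched in Python. This is my interpretation, not the exact code: it assumes the values are frame times in ms (so the "max" is the slowest frame), and the class name `WindowedPeakEstimator` and its parameters are made up for illustration.

```python
class WindowedPeakEstimator:
    """Running worst-case frame time, reseeded every `window` frames.

    Hypothetical sketch: within a block the estimate is the max of the
    seed and every frame seen so far; at the end of a block it is reset
    to seed_scale * (average of that block).
    """

    def __init__(self, window=100, seed_scale=1.5):
        self.window = window
        self.seed_scale = seed_scale
        self.estimate = 0.0  # current value to adjust for (ms)
        self._sum = 0.0      # sum of frame times in the current block
        self._count = 0      # frames seen in the current block

    def add(self, frame_ms):
        """Feed one frame time and return the updated estimate."""
        self.estimate = max(self.estimate, frame_ms)
        self._sum += frame_ms
        self._count += 1
        if self._count == self.window:
            # Block finished: reseed from the block average so one old
            # slow frame does not pin the estimate forever.
            self.estimate = self.seed_scale * self._sum / self._count
            self._sum = 0.0
            self._count = 0
        return self.estimate


est = WindowedPeakEstimator(window=3, seed_scale=1.5)
history = [est.add(ms) for ms in (10.0, 12.0, 11.0, 5.0, 20.0, 5.0)]
```

Reseeding at 1.5 times the average keeps some headroom at the start of each block, so a slow frame early in the next block is less likely to catch the estimate off guard.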

Also, I think what you meant was a minute and a half (about 83 seconds), but I still get your point.
