Rendering problems with Oculus Rift

Hey guys,

As part of my master's thesis I have to read in point clouds captured with a laser scanner and display them in Unity and on the Oculus Rift. I'm using Unity 5.3.4f1 and the Oculus Rift DK2. The points are stored as vertices in meshes, and I render them with the following shader so that each point shows up as a rectangle:

    Shader "Custom/PointMeshSizeDX11Quad"
    {
        Properties
        {
            _Color ("ColorTint", Color) = (1,1,1,1)
            _Size ("Size", Range(0.001, 1)) = 0.03
        }

        SubShader
        {
            Pass
            {
                Tags { "RenderType"="Opaque" }
                LOD 200

                CGPROGRAM
                #pragma vertex VS_Main
                #pragma fragment FS_Main
                #pragma geometry GS_Main

                // Per-vertex input: one mesh vertex per scanned point.
                struct appdata
                {
                    float4 vertex : POSITION;
                    float4 color : COLOR;
                };

                // Vertex shader output, consumed by the geometry shader.
                struct GS_INPUT
                {
                    float4 pos : POSITION;
                    fixed4 color : COLOR;
                };

                // Geometry shader output, consumed by the fragment shader.
                struct FS_INPUT
                {
                    float4 pos : SV_POSITION;
                    fixed4 color : COLOR;
                };

                float _Size;
                fixed4 _Color;

                // Passes position and color through unchanged; the quad is built in GS_Main.
                GS_INPUT VS_Main(appdata v)
                {
                    GS_INPUT o = (GS_INPUT)0;
                    o.pos = v.vertex;
                    o.color = v.color;
                    return o;
                }

                // Expands each point into a camera-facing quad (4-vertex triangle strip).
                [maxvertexcount(4)]
                void GS_Main(point GS_INPUT p[1], inout TriangleStream<FS_INPUT> triStream)
                {
                    // p[0].pos is the untransformed mesh vertex. The math below treats it as a
                    // world-space position, which only holds if the object's transform is identity.
                    float3 center = p[0].pos.xyz;
                    float3 cameraUp = UNITY_MATRIX_IT_MV[1].xyz;
                    float3 cameraForward = _WorldSpaceCameraPos - center;
                    float3 right = normalize(cross(cameraUp, cameraForward));

                    // Quad corners in triangle-strip order.
                    float4 v[4];
                    v[0] = float4(center + _Size * right - _Size * cameraUp, 1.0f);
                    v[1] = float4(center + _Size * right + _Size * cameraUp, 1.0f);
                    v[2] = float4(center - _Size * right - _Size * cameraUp, 1.0f);
                    v[3] = float4(center - _Size * right + _Size * cameraUp, 1.0f);

                    // MVP * World2Object reduces to the view-projection matrix.
                    float4x4 vp = mul(UNITY_MATRIX_MVP, _World2Object);

                    FS_INPUT newVert;
                    newVert.pos = mul(vp, v[0]);
                    newVert.color = p[0].color;
                    triStream.Append(newVert);

                    newVert.pos = mul(vp, v[1]);
                    newVert.color = p[0].color;
                    triStream.Append(newVert);

                    newVert.pos = mul(vp, v[2]);
                    newVert.color = p[0].color;
                    triStream.Append(newVert);

                    newVert.pos = mul(vp, v[3]);
                    newVert.color = p[0].color;
                    triStream.Append(newVert);
                }

                // Tints the per-point color with the material color.
                fixed4 FS_Main(FS_INPUT input) : SV_Target
                {
                    return input.color * _Color;
                }
                ENDCG
            }
        }
    }

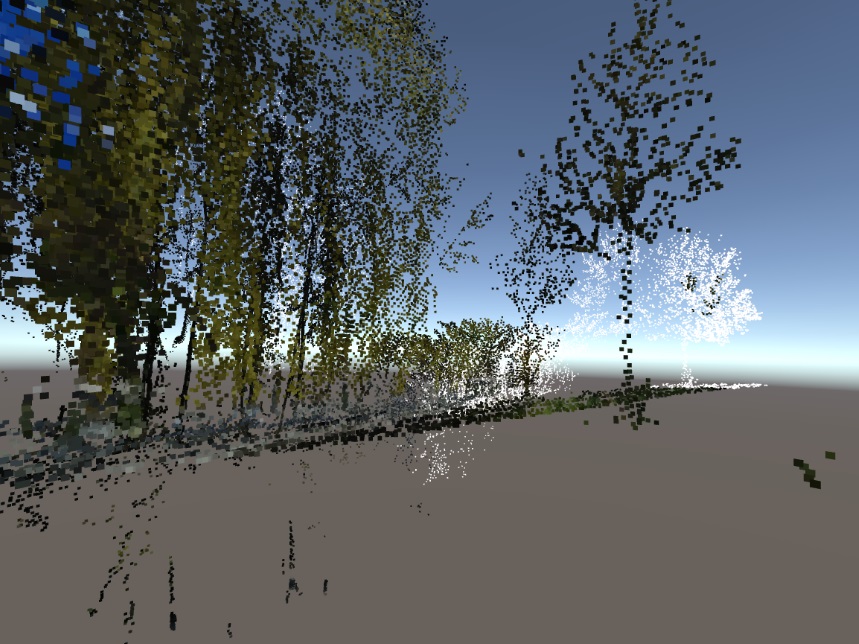

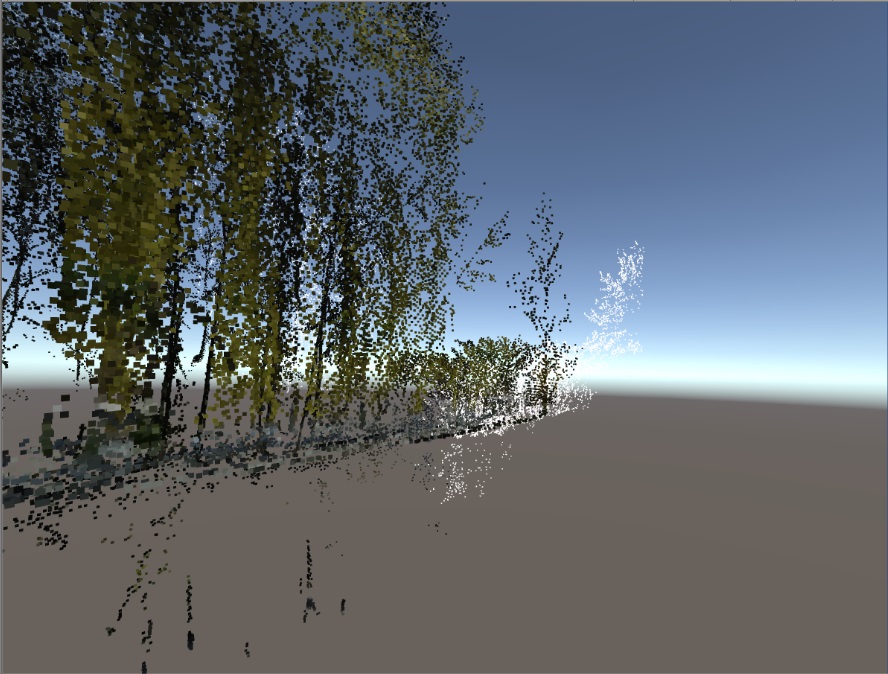

Everything works fine so far, but the Oculus Rift doesn't render GameObjects that aren't completely "in screen", and I don't know why. The two pictures show what happens when I move my head only slightly: some of the GameObjects simply disappear. It also happens when I'm above the points, where whole point cloud tiles disappear while I'm looking at them.

Any idea why this is happening and where the problem could be? I've been dealing with this problem for a couple of weeks now, and it's quite annoying, since I still have other things to work on.

Thanks for your answers in advance!
