GPU usage skyrockets when the Oculus headset is taken off or disconnected

Hi there,

I'm maintaining a Unity experience that uses an Oculus Rift S headset. It runs on a dedicated Alienware PC (Nvidia GTX 1080) at a single location. The game sits idle most of the day and is only played occasionally. The display setup is an Oculus headset plus a projector. When the headset is not in use, the projector displays a video (played with ProAV). While the game is being played, the projector mirrors what you see in the headset.

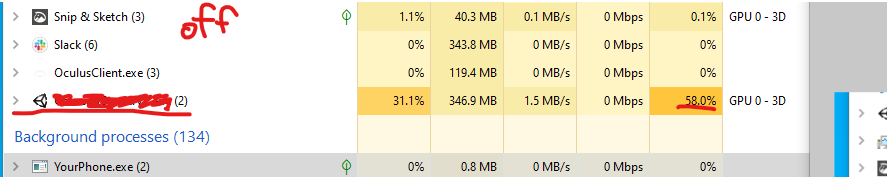

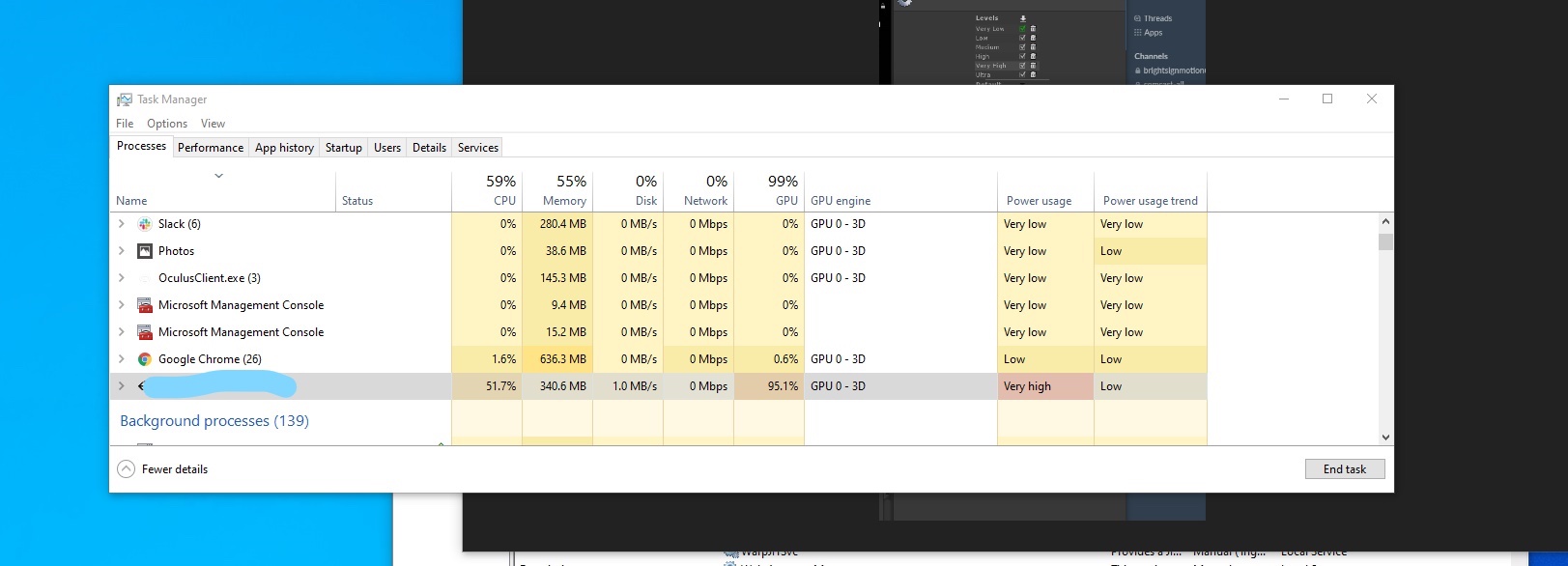

The game has been crashing more often lately. According to the logs, the crash is sometimes caused by a ProAV error saying the computer was out of resources. That seems odd: the machine has more than decent specs, and nothing is happening in-game. The idle process doesn't seem expensive in any meaningful way, so I believe a Unity, Oculus, Windows, or some other setting is at play. The game doesn't crash during gameplay, which includes a couple of very expensive processes. It turns out the build runs at around 60% GPU usage while idle. When I put the headset on, GPU usage drops (?!!) to 30-40%. And when the headset is disconnected entirely, GPU usage skyrockets to 90-100%.

When I take the headset off, the VSync process spikes, dropping the framerate to around 15 fps. To be clear, this coincides with the GPU/CPU spike. Forums say VSync/WaitForGPU is not a problem, but my errors make me think otherwise. My theory: VSync/WaitForGPU is driving up CPU/GPU usage, and while the machine sits in that elevated state, other Windows processes or random oddities push it over the edge and the game crashes. As far as I know, this never happened before I raised the quality settings.

Regarding the behavior when the headset is disconnected: can I turn it off somehow? Since the experience is at another location, I can't check every day whether the cables are properly seated in their ports.
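Since I can't be on site, one way to capture the idle, headset-on, and disconnected GPU numbers over time (and line them up with the crash timestamps) would be a small logger. Below is a minimal sketch, assuming `nvidia-smi` (installed with the Nvidia driver) is on the PATH and the machine has a single GPU; the file name `gpu_log.csv`, the 5-second interval, and the function names are hypothetical choices, not part of the project.

```python
import csv
import subprocess
import time
from datetime import datetime

# Standard nvidia-smi --query-gpu fields: GPU utilization (%) and used memory (MiB).
QUERY = "utilization.gpu,memory.used"


def parse_sample(line):
    """Parse one 'csv,noheader,nounits' line such as '61, 2048' into (util, mem)."""
    util, mem = (int(field.strip()) for field in line.split(","))
    return util, mem


def sample_gpu():
    """Run nvidia-smi once and return (utilization %, memory used MiB) for the first GPU."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=True,
    )
    # nvidia-smi prints one line per GPU; assumes the single GTX 1080 is first.
    return parse_sample(result.stdout.splitlines()[0])


def main(path="gpu_log.csv", interval_s=5.0):
    """Append a timestamped sample to a CSV file every interval_s seconds, forever."""
    with open(path, "a", newline="") as f:
        writer = csv.writer(f)
        while True:
            util, mem = sample_gpu()
            writer.writerow([datetime.now().isoformat(timespec="seconds"), util, mem])
            f.flush()  # keep the file current in case the machine crashes
            time.sleep(interval_s)
```

Saved as `gpu_log.py`, it could be started with `python -c "import gpu_log; gpu_log.main()"` and left running. If `nvidia-smi` reports a field as `[N/A]`, `parse_sample` raises a `ValueError`, so this is only a starting point.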

I'm actively lowering the quality settings, etc. to mitigate this, but I still find the GPU usage spike (and the resulting errors) somewhat disturbing. Is this known behavior for VSync/WaitForGPU? The whole thing seems incredibly weird.

Apologies if this is a commonly known topic; I didn't find much information in my searches. Maybe I'm digging in the wrong direction? I can give more details about the setup: Unity version 2018.2, using NewtonVR, not using SteamVR, etc. Let me know what you need to know to tell me what I need to know. :)

Thanks!!
