Unexpected behaviour using WorldToViewportPoint

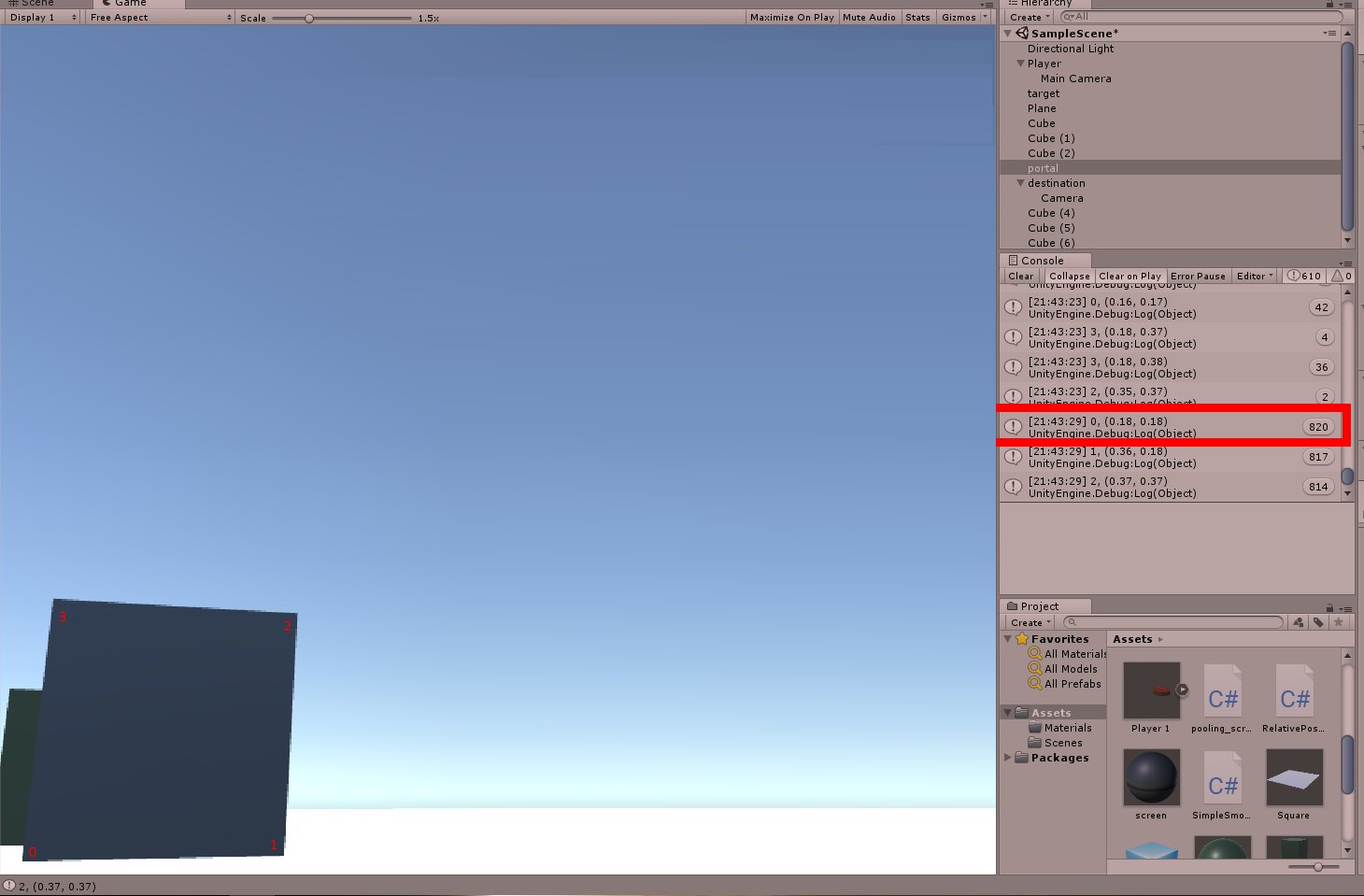

I am trying to get the normalized screen position of some vertices of a mesh using WorldToViewportPoint. However, the coordinates it returns differ from the actual screen coordinates. An example can be seen in the image above.

The bottom-right vertex is almost off the screen, but the coordinates returned are close to (0.2, 0.2) instead of (0, 0). The object to which the mesh is attached has an identity scale and rotation.

The code I use to create the mesh is as follows:

    // Quad corners in the object's local space.
    vertices = new Vector3[]
    {
        new Vector3(1.0f, -1.0f, 0.0f),
        new Vector3(-1.0f, -1.0f, 0.0f),
        new Vector3(-1.0f, 1.0f, 0.0f),
        new Vector3(1.0f, 1.0f, 0.0f)
    };

    // Two triangles sharing the diagonal between vertices 0 and 2.
    triangles = new int[]
    {
        0, 2, 1,
        3, 2, 0
    };

    mesh.Clear();
    mesh.vertices = vertices;
    mesh.triangles = triangles;
    mesh.RecalculateNormals();

To calculate the coordinates in the viewport, I then use:

    Vector2 normalCoordinates = targetCamera.WorldToViewportPoint(position + vertices[i]);

Here, position is the position of the object.
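For comparison, here is a minimal debugging sketch that converts each local-space vertex with `Transform.TransformPoint` instead of adding `position` by hand, then logs the full `Vector3` returned by `WorldToViewportPoint`. The class name `ViewportDebug` and the `meshFilter` field are hypothetical, and the sketch assumes `targetCamera` is the camera that actually renders the view being inspected. It is a sanity check under those assumptions, not a confirmed fix.

```csharp
using UnityEngine;

// Hypothetical debug helper; the class and field names are illustrative only.
public class ViewportDebug : MonoBehaviour
{
    public Camera targetCamera;   // assumed: the camera rendering the view in question
    public MeshFilter meshFilter; // assumed: holds the quad mesh built earlier

    void Update()
    {
        Vector3[] vertices = meshFilter.sharedMesh.vertices;
        for (int i = 0; i < vertices.Length; i++)
        {
            // Local space -> world space, taking position, rotation, scale and parents into account.
            Vector3 world = meshFilter.transform.TransformPoint(vertices[i]);

            // x and y are normalized viewport coordinates; z is the distance from the camera in world units.
            Vector3 viewport = targetCamera.WorldToViewportPoint(world);

            Debug.Log($"vertex {i}: world {world}, viewport {viewport}");
        }
    }
}
```

If the logged world positions differ from `position + vertices[i]`, the object or one of its parents has a non-identity transform. If they match, the difference more likely comes from the camera being used.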

Why does WorldToViewportPoint return these coordinates? Am I overlooking or misunderstanding something? Thanks in advance.
