Obtaining relative speed of vertex in a shader

Hello everyone. I am trying to create a particular shader effect.

Basically, I want the emissivity of each vertex to scale with its speed relative to the camera.

Right now, I can scale the emissivity of each vertex with its distance from the camera using the following shader:

Shader "Unlit/EmissiveSpeed Shader" {

Properties{

_Color("Color", Color) = (1,1,1,1)

_MainTex("Albedo (RGB)", 2D) = "white" {}

_Dist("Distance", Float) = 1

_Glossiness("Smoothness", Range(0,1)) = 0.5

_Metallic("Metallic", Range(0,1)) = 0.0

_EmissionColor("Color", Color) = (0,0,0)

_EmissionMap("Emission", 2D) = "white" {}

_Emission("Bloom", Float) = 1

}

SubShader{

Tags{ "RenderType" = "Opaque" }

LOD 200

CGPROGRAM

// Physically based Standard lighting model, and enable shadows on all light types

#pragma surface surf Standard vertex:vert fullforwardshadows

//#pragma surface surf Lambert vertex:vert

// Use shader model 3.0 target, to get nicer looking lighting

#pragma target 3.0

sampler2D _MainTex;

sampler2D _EmissionMap;

half _Dist;

half _Emission;

half _Glossiness;

half _Metallic;

fixed4 _Color;

struct Input {

float2 uv_MainTex, uv_EmissionMap;

float3 viewDir;

half relativeSpeed;

};

void vert(inout appdata_full v, out Input o) {

UNITY_INITIALIZE_OUTPUT(Input, o);

half3 viewDirW = _WorldSpaceCameraPos - mul((half4x4)unity_ObjectToWorld, v.vertex);

half viewDist = length(viewDirW);

o.relativeSpeed = saturate(viewDist / 1000);

}

void surf(in Input IN, inout SurfaceOutputStandard o) {

fixed4 c = tex2D(_MainTex, IN.uv_MainTex) * _Color;

o.Albedo = c.rgb;

o.Metallic = _Metallic;

o.Smoothness = _Glossiness;

o.Emission = tex2D(_EmissionMap, IN.uv_EmissionMap) * _Color * _Emission * IN.relativeSpeed;

o.Alpha = c.a;

}

ENDCG

}

FallBack "Diffuse"

}

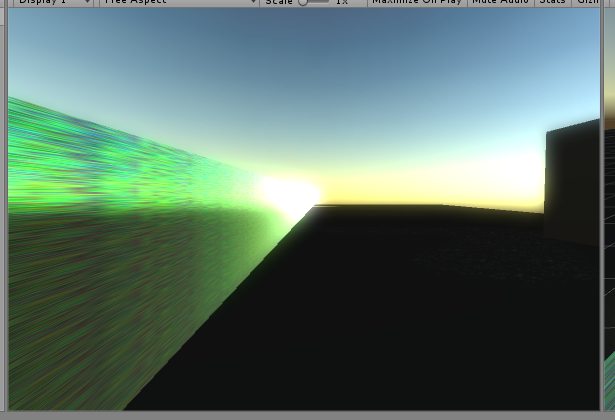

This creates the following, already kinda neat effect:

Now, my plan is to somehow store the vertex-camera distance for a frame, and use it to calculate the vertex-camera head-on relative speed on the next one, while also updating the vertex-camera distance.

Does anyone have pointers on how I might do that? Or a better idea for achieving it altogether?

Answer by IgorAherne · Feb 10, 2017 at 12:15 PM

That's similar to how per-object motion blur is done.

You store the M (Model) matrix (16 floats) from the previous frame, one such matrix per mesh object. The Model matrix takes points from local space and transforms them into world space (relative to the world's zero coordinate).

As you draw the mesh, as well as getting the usual vertex coordinate in MVP space, you also have two world-space positions: one using the old M and one using the current M-matrix.

Therefore, during the fragment stage you are able to see where the fragments were in the previous frame, and can calculate the difference vector. You then use the length (and perhaps the direction) as the coefficients to do whatever you need.

That's it.
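To make the idea concrete, here is a minimal CPU-side sketch in C# of the same bookkeeping: keep last frame's model matrix and transform the same local point with both matrices. The class name `PreviousMatrixTracker` and the method `DisplacementOf` are hypothetical names chosen for illustration; in the actual effect the same two multiplications would happen in the shader.

```csharp
using UnityEngine;

// Hypothetical helper (not from the thread): remembers last frame's model matrix.
public class PreviousMatrixTracker : MonoBehaviour
{
    private Matrix4x4 previousLocalToWorld;

    void Start()
    {
        // Start from the current matrix so the first frame reports no motion.
        previousLocalToWorld = transform.localToWorldMatrix;
    }

    // World-space displacement of a local-space point since the stored frame.
    // Call this from Update(), after the object has moved for the current frame.
    public Vector3 DisplacementOf(Vector3 localPoint)
    {
        Vector3 oldWorld = previousLocalToWorld.MultiplyPoint3x4(localPoint);
        Vector3 newWorld = transform.localToWorldMatrix.MultiplyPoint3x4(localPoint);
        return newWorld - oldWorld;
    }

    void LateUpdate()
    {
        // Store the matrix once all Update() movement for this frame is done.
        previousLocalToWorld = transform.localToWorldMatrix;
    }
}
```

Dividing the returned displacement by `Time.deltaTime` gives a velocity in world units per second.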

Additionally, if you are doing any further post-processing, you could store the result in a texture.

You could even pack the direction and magnitude into a so-called "velocity texture", with RGB storing the packed direction and A storing the length for each fragment.

You do the packing like this (code is in GLSL coz I don't know CG):

float inv_max_length = 1.0 / max_allowed_length;

vec3 myWorldVec = vec3(4, -70, 40);

vec3 myWorldDir = normalize(myWorldVec); // normalized direction in world space (0.05, -0.87, 0.5)

float myWorldVecLength = length(myWorldVec); // length (notice, it's larger than 1, won't fit into texture!)

Notice that the components of myWorldDir can be both negative and positive (they never go above |1|), but a texture can only store values between 0 and 1. Hence we need to do packing:

// multiplication is typically cheaper than division, hence we divided once, and are now just multiplying by the inverse.

vec4 myPackedRGBA = vec4( myWorldDir * 0.5 + 0.5, myWorldVecLength * inv_max_length);

now, you can shove all the values into the texture, since components of myPackedRGBA are all between 0 and 1. You just need to reverse the process correctly, once you are in the fragment shader, to get back your values:

vec4 myPackedRGBA = texture( myPackedTexture, uv );

vec4 myUnpackedRGBA = vec4( myPackedRGBA.rgb * 2.0 - 1.0, // get back world direction (unit length)

myPackedRGBA.a / inv_max_length); // get back the length of the world vector.
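For readers more at home in C# than GLSL, the same pack/unpack round trip can be sketched on the CPU with Unity's vector types. The class name `VelocityPacking` and the constant `MaxAllowedLength` (set to 100 here) are assumptions for illustration; the clamp is an addition so that the alpha value cannot exceed 1.

```csharp
using UnityEngine;

// Hypothetical helper (not from the thread): packs a world-space vector into 0..1 values.
public static class VelocityPacking
{
    // Assumed upper bound on vector length; longer vectors are clamped.
    public const float MaxAllowedLength = 100f;

    public static Vector4 Pack(Vector3 worldVec)
    {
        Vector3 dir = worldVec.normalized;                 // components in [-1, 1]
        float len = Mathf.Min(worldVec.magnitude, MaxAllowedLength);
        Vector3 rgb = dir * 0.5f + Vector3.one * 0.5f;     // remap to [0, 1]
        return new Vector4(rgb.x, rgb.y, rgb.z, len / MaxAllowedLength);
    }

    public static Vector3 Unpack(Vector4 packed)
    {
        // Undo the remap to recover the unit direction, then rescale by the length.
        Vector3 dir = new Vector3(packed.x, packed.y, packed.z) * 2f - Vector3.one;
        float len = packed.w * MaxAllowedLength;
        return dir * len;
    }
}
```

Calling `VelocityPacking.Unpack(VelocityPacking.Pack(new Vector3(4, -70, 40)))` should return approximately the original vector. Once the packed value goes through an 8-bit-per-channel texture, expect some loss of precision from quantization.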

The tricky part is constantly updating the old matrix for each object; I don't know how to do that efficiently in Unity. The good news is that you only need one old M-matrix per object, and all of its vertices can use that single matrix. Remember that a matrix is just 16 float values. You could in theory store several such matrices in a texture or a buffer of some sort. Just make sure it's efficient, since it has to be written to every frame.

Also, if you want the speed relative to the camera, use the Model-View matrix instead of the Model matrix.

A word of caution: make sure nothing happens to the alpha component, i.e. that the packed values are not corrupted by alpha blending.

Excellent answer! I understand much more clearly what I am trying to do now. I am an utter beginner with shader writing, so I have a few questions concerning the implementation.

So, how do I actually obtain the M(Model) or M(Model-View) matrix from the mesh? My guess is that a C# script on the object containing the mesh can obtain this Matrix4x4 variable. Maybe with something like GetComponent().mesh.ModelMatrix?

edit: more like GetComponent().localToWorldMatrix; ?

Second, how do I pipe this Matrix4x4 to my shader? Should I update a material parameter with something like this?

GetComponent<Renderer>().material.SetMatrix ("_Position", posMatrix);

And finally, concerning the storing of the old position, can I simply have a

float4 _OldPos;

somewhere after CGPROGRAM and just update _OldPos = _Position after I have obtained the speed at the end of my fragment stage? Or should I have both the old and the present position as variables in my C# script, and I pipe both of them every frame?

yep, transform.localToWorldMatrix is your M-matrix.

Here is your local to camera matrix (if you require it). Notice the order of multiplication, matrices are multiplied from right-to-left, first local->world, then world->camera:

Matrix4x4 MVmatrix = Camera.main.worldToCameraMatrix * transform.localToWorldMatrix;

You should bind it to a uniform, which is a single copy of a value that is read-only for the shaders which use it. As a result, uniforms save greatly on memory because there is only 1 instance for all vertex / fragment invocations (unlike with input structures, where there is a separate copy for each vertex / fragment invocation).

float4 _OldPos should be a uniform as well, but it will have to be supplied every frame. I know that in OpenGL you could use buffers, so that it just sits in there on the GPU, but in Unity I don't know how to do it.

Keep in mind that Unity is sending lots of uniforms every frame anyway (MVP matrices, etc), so a couple of extra uniforms won't hurt.

You can find more about uniforms here. How to bind them in Unity I don't know; you will have to research that specifically. Probably through materials, similar to how we set colors, etc.

Hope this helps

And here is the C# script:

using System;

using UnityEngine;

[ExecuteInEditMode]

public class RedBlueShift : MonoBehaviour

{

private Matrix4x4 oldWorldMatrix, worldMatrix;

private MeshRenderer meshRender;

private Mesh mesh;

void Start()

{

meshRender = GetComponent<MeshRenderer>();

// Mesh is not a component; it is reached through the MeshFilter.

mesh = GetComponent<MeshFilter>().sharedMesh;

oldWorldMatrix = Matrix4x4.zero;

worldMatrix = Matrix4x4.zero;

}

void Update()

{

// Send last frame's matrix before overwriting it with the current one.

meshRender.sharedMaterial.SetMatrix("_OldWorldMatrix", oldWorldMatrix);

// Despite the name, this is the model-view matrix (local -> world -> camera).

worldMatrix = Camera.main.worldToCameraMatrix * transform.localToWorldMatrix;

meshRender.sharedMaterial.SetMatrix("_WorldMatrix", worldMatrix);

oldWorldMatrix = worldMatrix;

}

}
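One thing to watch with `sharedMaterial`: every object using that material receives the same matrices. A possible alternative, sketched below, is a `MaterialPropertyBlock` set per renderer. This is only a sketch under assumptions: the class name `PerRendererMatrices` is hypothetical, it passes the plain model matrix rather than the model-view matrix, and it assumes the shader declares `float4x4 _OldWorldMatrix` and `float4x4 _WorldMatrix`.

```csharp
using UnityEngine;

// Hypothetical alternative (not from the thread): per-renderer matrices
// via MaterialPropertyBlock, so objects sharing a material do not clash.
[RequireComponent(typeof(Renderer))]
public class PerRendererMatrices : MonoBehaviour
{
    private Renderer rend;
    private MaterialPropertyBlock block;
    private Matrix4x4 oldMatrix;

    void Start()
    {
        rend = GetComponent<Renderer>();
        block = new MaterialPropertyBlock();
        // Start from the current matrix so the first frame shows no motion.
        oldMatrix = transform.localToWorldMatrix;
    }

    void LateUpdate()
    {
        Matrix4x4 current = transform.localToWorldMatrix;

        // Read existing per-renderer values, overwrite the two matrices, write back.
        rend.GetPropertyBlock(block);
        block.SetMatrix("_OldWorldMatrix", oldMatrix);
        block.SetMatrix("_WorldMatrix", current);
        rend.SetPropertyBlock(block);

        // This frame's matrix becomes next frame's "old" matrix.
        oldMatrix = current;
    }
}
```

Running the update in `LateUpdate` is a design choice so that movement applied in other scripts' `Update` calls is already reflected in the matrix.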

Now, only a few tweaks to hammer out. The main one is that the angular velocity of the camera seems to affect the glowing. EG: https://webmshare.com/aDeG7

As you can see in the following line, I use a dot product between the velocity vector of the vert and the vert-camera vector to obtain the scalar of the speed that is purely directly towards or away from the camera:

half viewSpeed = dot(speedVec, _WorldSpaceCameraPos - newPos);

As such, rotating the camera without translation shouldn't be affecting this quantity. Is there something obvious I am missing?

The second problem is the slight flickering in the glow levels, but I think that can be fixed by using a lerp in the C# script.

If you don't really know, it's fine, you've been a huge help!
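A possible explanation, offered as a guess since the shader that computes `speedVec` and `newPos` is not shown: the script above uploads `worldToCameraMatrix * localToWorldMatrix`, so positions transformed with it are in camera space, which changes whenever the camera rotates, while `_WorldSpaceCameraPos` is in world space. The vert-camera vector is also not normalized, so the result scales with distance. The sketch below shows the intended quantity on the CPU with everything in world space; the name `RadialSpeed.Toward` and its parameters are hypothetical.

```csharp
using UnityEngine;

// Hypothetical helper (not from the thread): head-on speed between a point and the camera.
public static class RadialSpeed
{
    // Returns the signed speed along the point-to-camera line, in units per second.
    // Positive means the point and camera are approaching, negative means receding.
    // All positions must be in world space.
    public static float Toward(Vector3 oldPos, Vector3 newPos,
                               Vector3 oldCamPos, Vector3 newCamPos,
                               float deltaTime)
    {
        if (deltaTime <= 0f) return 0f; // avoid dividing by zero on a paused frame

        // Velocity of the point relative to the camera's own translation.
        Vector3 relativeVelocity =
            ((newPos - oldPos) - (newCamPos - oldCamPos)) / deltaTime;

        // Unit vector from the point to the camera, so only direction matters.
        Vector3 toCamera = (newCamPos - newPos).normalized;

        return Vector3.Dot(relativeVelocity, toCamera);
    }
}
```

Because only positions enter the calculation, rotating the camera in place leaves the result unchanged.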

I only want the shader to use the position of the camera. I am not sure what cameraMatrix contains, but it is probably superfluous information for this specifically.

It contains the same information as the local matrix of any object.

The camera matrix allows you to "impersonate" the camera object, and specify any coordinate relative to it. For example, if I stand nearby you but look in a different direction, both of us have a "front" direction. But it's different for each one of us.

I will have my own matrix, and you will have your own matrix.

After the coordinates are specified relative to the world center, we can take them and re-specify them relative to me, by transforming them with my own matrix. Now it will seem as if I am the zero-coordinate, and the coordinates are all specified relative to my forward vector.

If we were instead to take world coordinates and specify them relative to you, we would see a different pattern of coordinates.

It would be totally different to the pattern of coordinates that I saw, but the new pattern would now describe what you see with your eyes.

That's because these coordinates now treat you as the zero-coordinate, and adhere to your forward vector (and with it, to your coordinate system, which is local to you).

So the View matrix is nothing but merely another "local" matrix, just of an entity to which we refer as "camera".

For that matter, world matrix is nothing but a "local" matrix, just of an entity to which we refer as "World"

:D

Yes, you could compare world positions, by finding the displacement relative to that God-like object called "World". In the same way you could compute displacement relative to camera itself, using the View-matrix, if ever needed.

Note that you shouldn't go MyObject's localspace --> CameraObject's localspace. You still need to go through the world to arrive into the camera:

MyObject's localspace --> (Apply Model Matrix) World object's localspace --> (Apply View Matrix) CameraObject's localspace
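The chain above can be checked numerically with a small C# sketch. It transforms the object's pivot step by step and also with the combined model-view matrix, then logs the difference, which should be close to zero. The class name `SpaceChainExample` is hypothetical, and the script assumes a camera tagged MainCamera exists in the scene.

```csharp
using UnityEngine;

// Hypothetical demo (not from the thread): local -> world -> camera, two ways.
public class SpaceChainExample : MonoBehaviour
{
    void Start()
    {
        Camera cam = Camera.main;
        if (cam == null) return; // no camera tagged MainCamera in the scene

        Vector3 localPoint = Vector3.zero; // the object's pivot in its own local space

        // Step by step: apply the Model matrix, then the View matrix.
        Vector3 worldPoint = transform.localToWorldMatrix.MultiplyPoint3x4(localPoint);
        Vector3 cameraPoint = cam.worldToCameraMatrix.MultiplyPoint3x4(worldPoint);

        // Combined: matrices multiply right-to-left, so Model is applied first.
        Matrix4x4 modelView = cam.worldToCameraMatrix * transform.localToWorldMatrix;
        Vector3 viaCombined = modelView.MultiplyPoint3x4(localPoint);

        // Expected to be near zero, up to floating-point error.
        Debug.Log("Difference between the two routes: " +
                  (cameraPoint - viaCombined).magnitude);
    }
}
```

Note that `worldToCameraMatrix` follows the OpenGL convention, so points in front of the camera end up with a negative z in camera space.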
