
How to calculate UV coordinates of the outer edge of a texture mask?

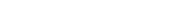

I'm trying to write a shader that increases the normal weight at the UV positions along an array of rays, V = mU + B, running from (0,0) to (0,128), or rather between the upper and lower corners of my texture file (128 x 128). I also want U to increase at the rate `_momentum`, and the active (U,V) of each ray to have a normal weight of 0.2 for the active moment, with all other points at a weight of 0. I'm not familiar with manipulating normals in shaders, but perhaps it could be done in C# by using an alpha gradient prefab.
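To make the description concrete, here is a minimal sketch of the weight map at one instant, written in plain C# (no UnityEngine) so it can be run anywhere. The class and parameter names are hypothetical, the array is indexed `[u, v]`, and it assumes one ray per integer intercept b from 0 to size - 1.

```csharp
using System;

public static class RayFront
{
    // Weight of every texel at a given time for the ray family v = m*u + b.
    // Assumption: each ray's active point sits at u = momentum * time,
    // and only that texel gets activeWeight; everything else stays 0.
    public static float[,] Weights(int size, float m, float momentum,
                                   float time, float activeWeight = 0.2f)
    {
        var w = new float[size, size];            // indexed [u, v], starts at 0
        int u = (int)Math.Round(momentum * time); // shared U of the wave front
        if (u < 0 || u >= size) return w;         // front is outside the texture

        for (int b = 0; b < size; b++)            // one ray per intercept b
        {
            int v = (int)Math.Round(m * u + b);
            if (v >= 0 && v < size) w[u, v] = activeWeight;
        }
        return w;
    }
}
```

Calling `RayFront.Weights(128, 0f, 10f, 2f)` would give a vertical line of 0.2 weights at U = 20; the same per-texel test could presumably be moved into a fragment shader by comparing the texel's U against `_momentum * _Time.y`.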

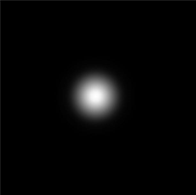

Could my shader instantiate this alpha mask prefab at the active endpoint of each ray? At this point the texture would appear to have a wave rolling across it. But I also want to include a mask, so that any ray that meets the green mask halts and can even perform other behaviors originating from that location.

Point A represents the greatest value of b from my equation, and point B the lowest value of b. By pulling these coordinates I would know the range of (blue) rays that collide. Then, for each point where those rays collide with the mask, I could send a backwards-casting ray with a `_degrademomentum` and a negative normal weight/inverted alpha prefab, possibly even increasing the normal weight as those specific rays approach their endpoint.
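As a rough sketch of that idea, suppose the hit column of every ray is already known and stored in an array indexed by b, with -1 meaning the ray never met the mask. The names below are hypothetical and the code is plain C#; it only shows how points A and B and the backward ray's position might be derived.

```csharp
using System;

public static class CollisionRange
{
    // hitU[b] is the U column where ray b met the mask, or -1 for no hit.
    // Returns false when no ray collides; otherwise minB is point B's
    // intercept (lowest b) and maxB is point A's intercept (greatest b).
    public static bool TryGetRange(int[] hitU, out int minB, out int maxB)
    {
        minB = -1;
        maxB = -1;
        for (int b = 0; b < hitU.Length; b++)
        {
            if (hitU[b] < 0) continue;  // this ray misses the mask
            if (minB < 0) minB = b;     // first colliding ray seen
            maxB = b;                   // last colliding ray seen so far
        }
        return minB >= 0;
    }

    // U position of the backward ray a given time after the collision,
    // assuming it retreats at degradeMomentum texels per second and
    // stops at the left edge of the texture.
    public static float BackwardU(int hitU, float degradeMomentum, float secondsSinceHit)
    {
        return Math.Max(0f, hitU - degradeMomentum * secondsSinceHit);
    }
}
```

With hit columns `{ -1, -1, 60, 58, 60, -1 }`, `TryGetRange` reports the colliding rays as b = 2 through b = 4, and `BackwardU(60, 10f, 2f)` places the returning ray at U = 40.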

What I most want to know is whether I can send raycasts across a 2D plane/texture and store the UV points where they meet the mask, and whether the rays can alter the texture's normals along each line over time.
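One way this might work without the physics engine at all is to march each ray across the mask's pixel data and record the first filled texel. The sketch below is plain C# with hypothetical names; it assumes the mask has already been read into a `bool[,]` indexed `[x, y]` (true where the green mask is), uses horizontal rays only, and reports UVs at texel centers.

```csharp
using System;
using System.Collections.Generic;

public static class MaskRays
{
    // Casts one left-to-right ray per row and stores the UV of the first
    // masked texel it meets. Rows that never touch the mask add nothing.
    public static List<(float u, float v)> FirstHitsUV(bool[,] mask)
    {
        int width = mask.GetLength(0);
        int height = mask.GetLength(1);
        var hits = new List<(float u, float v)>();

        for (int y = 0; y < height; y++)
        {
            for (int x = 0; x < width; x++)
            {
                if (!mask[x, y]) continue;
                // Texel center converted to normalized UV space.
                hits.Add(((x + 0.5f) / width, (y + 0.5f) / height));
                break; // this ray halts at the mask
            }
        }
        return hits;
    }
}
```

For a 4 x 4 mask with only texels (2,1) and (3,1) filled, the result is a single entry at UV (0.625, 0.375). The stored list could then be uploaded to a material or used on the CPU to decide which texels to repaint each frame.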

The reason I don't just tile the prefab and translate it across is that I want to use the edge coordinates to develop other equations for the remaining rays to follow. For instance, upon reaching U = 99, a ray would carry its momentum in another direction, such as backwards, while the other rays remain unchanged.
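The per-ray behavior described here could be sketched by giving every ray its own small state object, so one ray can turn around while the rest carry on. This is plain C# with hypothetical names (`RayState`, `TurnU`), not an existing Unity type.

```csharp
using System;

public sealed class RayState
{
    public float U;          // current position along the ray, in texels
    public float Momentum;   // signed speed in texels per second
    public float TurnU;      // U at which this ray reverses (99 in the example)

    public RayState(float momentum, float turnU)
    {
        U = 0f;
        Momentum = momentum;
        TurnU = turnU;
    }

    // Advances the ray by dt seconds; on reaching TurnU while moving
    // forward, it is clamped there and its momentum is flipped.
    public void Step(float dt)
    {
        U += Momentum * dt;
        if (Momentum > 0f && U >= TurnU)
        {
            U = TurnU;
            Momentum = -Momentum;
        }
    }
}
```

A ray created with `new RayState(50f, 99f)` reaches U = 50 after one second, is clamped to 99 and reversed after two, and is back at U = 49 after three, while a ray whose `TurnU` is `float.PositiveInfinity` keeps moving forward.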

Looking at this shader, I might be able to achieve what I want, but I would need to replace the box with my mask and still find the coordinates of the border between empty space and filled space: https://github.com/gillesferrand/Unity-RayTracing/blob/master/Assets/RayCaster.shader

    using UnityEngine;
    using System.Collections;

    [RequireComponent (typeof(MeshFilter), typeof(MeshRenderer))]
    public class ExampleVerts : MonoBehaviour
    {
        public int xSize, ySize;
        private Mesh mesh;

        private void Awake ()
        {
            Generate ();
        }

        private Vector3[] vertices;

        private void Generate ()
        {
            //Building the Mesh
            GetComponent<MeshFilter> ().mesh = mesh = new Mesh ();
            mesh.name = "Procedural Grid";
            vertices = new Vector3[(xSize + 1) * (ySize + 1)];
            Vector2[] uv = new Vector2[vertices.Length]; //this gives vertices UV information
            Vector4[] tangents = new Vector4[vertices.Length]; //Tangents for bump mapping
            Vector4 tangent = new Vector4 (1f, 0f, 0f, -1f); //^

            //double loop to position vertices
            for (int i = 0, y = 0; y <= ySize; y++) {
                for (int x = 0; x <= xSize; x++, i++) {
                    vertices [i] = new Vector3 (x, y);
                    uv [i] = new Vector2 ((float)x / xSize, (float)y / ySize);
                    tangents [i] = tangent;
                }
            }
            mesh.vertices = vertices;
            mesh.uv = uv;

            //giving the meshfilter triangles
            int[] triangles = new int[xSize * ySize * 6];
            for (int ti = 0, vi = 0, y = 0; y < ySize; y++, vi++) {
                for (int x = 0; x < xSize; x++, ti += 6, vi++) {
                    triangles [ti] = vi;
                    //triangles[1] = 1; //xSize + 1 to flip normal // these were transferred, since the triangles share vertices
                    //triangles[2] = xSize + 1; //1 ^
                    triangles [ti + 3] = triangles [ti + 2] = vi + 1;
                    triangles [ti + 4] = triangles [ti + 1] = vi + xSize + 1;
                    triangles [ti + 5] = vi + xSize + 2;
                }
            }
            mesh.triangles = triangles;
            mesh.RecalculateNormals ();
            StartCoroutine (OceanWake ());
        }

        public LayerMask layermask;

        //gizmos visualize vertices
        private void OnDrawGizmos ()
        {
            if (vertices == null) {
                return;
            }
            Gizmos.color = Color.white;
            for (int i = 0; i < vertices.Length; i++) {
                Vector3 _i = new Vector3 (0, vertices [i].y);
                Gizmos.DrawSphere (_i, 0.1f);
                RaycastHit hit;
                if (Physics.Raycast (vertices [i], Vector3.right, out hit, ySize + 1, layermask)) {
                    Gizmos.DrawSphere (hit.point, 0.1f);
                    //Debug.Log (hit.point);
                }
            }
        }

        private int _b;
        public float _rate;
        [Range (0, 20)]
        public int _rate2;
        private bool shoreline_stored = false;
        private Vector2[] pixels;
        private Vector2 shoreline;

        IEnumerator OceanWake ()
        {
            while (true) { //replace 'true' with future raycast boolean
                Texture2D texture = new Texture2D (128, 128);
                GetComponent<Renderer> ().material.SetTexture ("_DispTex", texture);
                int _b = Mathf.RoundToInt (_rate * Time.time);
                pixels = new Vector2[(texture.width) * (texture.height)];
                for (int i = 0, y = 0; y < texture.height; y++) { //y<texture.height is the max value for int y in this case 128
                    for (int x = 0; x < texture.width; x++, i++) {
                        pixels [i] = new Vector2 (x, y);
                        Color color = ((x & y) != 0 ? Color.white : Color.black);
                        //texture.SetPixel (_b, y, color); //for single line
                        if (!shoreline_stored) {
                            RaycastHit2D hit = Physics2D.Raycast (new Vector2 (0, pixels [i].y), Vector2.right, texture.width, layermask, -0.5f, 0.5f);
                            if (hit.collider != null) {
                                hit.point = shoreline;
                                Debug.Log (shoreline);
                                shoreline_stored = true;
                            }
                        }
                        for (int _mb = _b - _rate2; _mb < _b + _rate2; _mb++) {
                            if (y == shoreline.y) {
                                for (int shorelim = _b + _mb; shorelim < shoreline.x; shorelim++) {
                                    texture.SetPixel (shorelim, Mathf.RoundToInt (shoreline.y), color);
                                }
                            } else {
                                texture.SetPixel ((_b + _mb), y, color); //for a scalable range
                                if (x != _b + _mb) {
                                    int _p = x;
                                    texture.SetPixel (_p, y, Color.black);
                                }
                            }
                        }
                    }
                }
                texture.Apply ();
                yield return null;
            }
        }
    }

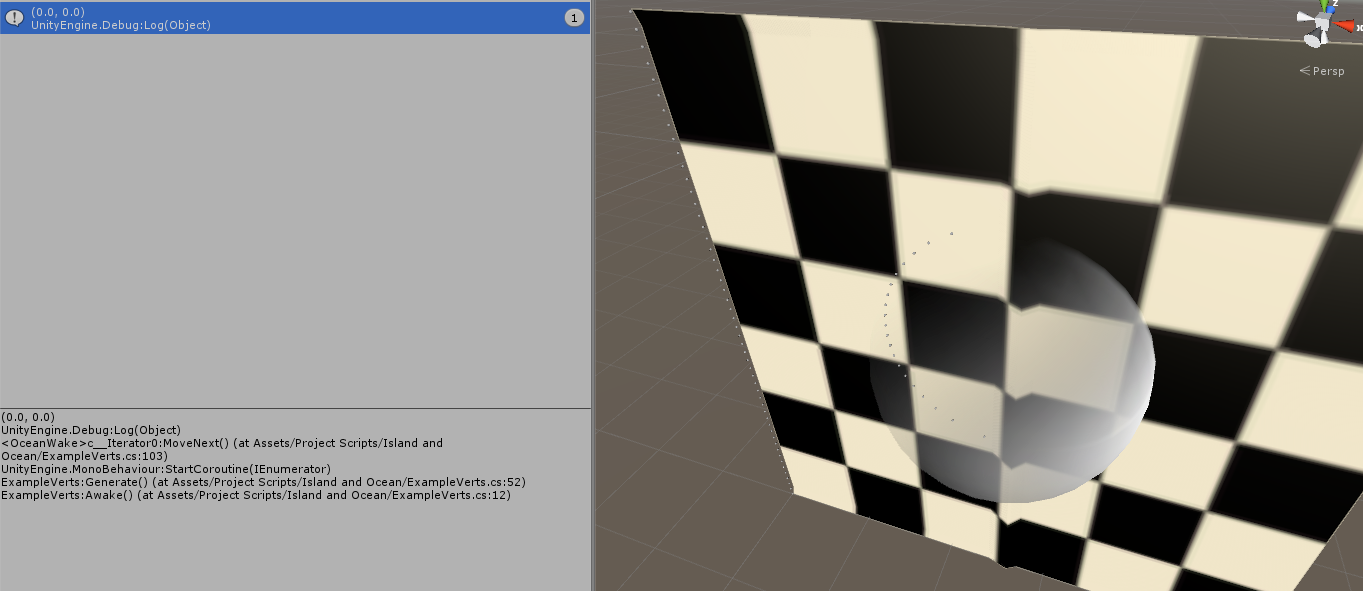

This is what I have so far.

Instead of tracking (u,v)'s, I use a black distortion map and update each pixel with white, according to my equations. It works for step one, sending the horizontal wave across the plane, but it fails at the collider phase.

I'm using both a 3D collider (to visualize the gizmos) and a 2D circle collider for the actual OceanWake().

Despite using the same technique that stores the hit points of my 3D raycasts, my 2D raycasts do not store theirs. My console only ever logs (0,0) (the bottom left of the plane) as the shoreline hit point, even though 1) there are no colliders in that spot, and 2) if I remove the shoreline_stored boolean, it does the same, except that it overloads my system with (0,0) logs.
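One possible cause, going only by the code as posted: inside `OceanWake()` the line `hit.point = shoreline;` copies the still-default `shoreline` field into the hit result, so `shoreline` itself is never written and stays at (0,0), which would match the log. The sketch below reproduces the effect in plain C# with hypothetical stand-in structs (`Point2`, `Hit`) in place of Unity's `Vector2` and `RaycastHit2D`; it is an illustration of the assignment direction, not a confirmed diagnosis of the scene setup.

```csharp
using System;

// Hypothetical stand-in for a 2D vector.
public struct Point2
{
    public float X, Y;
    public Point2(float x, float y) { X = x; Y = y; }
}

// Hypothetical stand-in for a raycast result (a value type, like RaycastHit2D).
public struct Hit
{
    public Point2 Point;
}

public static class ShorelineStore
{
    // Mirrors the posted line: the hit is overwritten, shoreline is untouched.
    public static Point2 Reversed(Hit hit)
    {
        Point2 shoreline = default(Point2); // (0, 0), like an unassigned field
        hit.Point = shoreline;              // writes INTO the local hit copy
        return shoreline;                   // still (0, 0)
    }

    // Swapped direction: the hit point is copied into shoreline.
    public static Point2 Intended(Hit hit)
    {
        Point2 shoreline = hit.Point;
        return shoreline;
    }
}
```

For a hit at (42, 7), `Reversed` returns (0, 0) and `Intended` returns (42, 7). If this is indeed the issue, swapping the statement to `shoreline = hit.point;` in the coroutine would be the corresponding change.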
