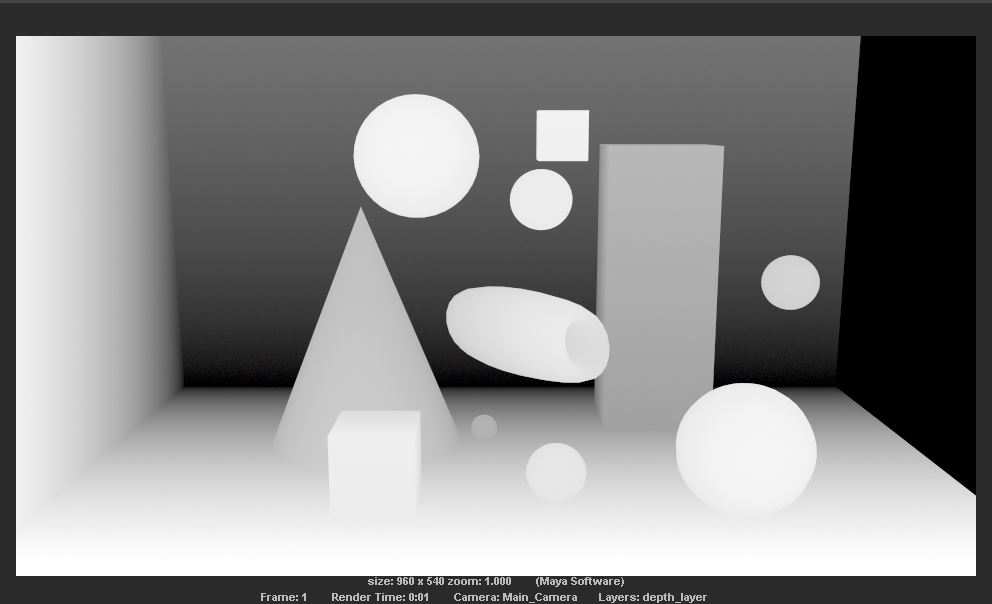

Depth values from a scene

Hi!

I have created a depth map in Maya 2016 from a certain scene. I want to get the distance value of each pixel from that depth map and use those values in Unity to create another scene. I am not sure whether I should do everything in Unity or get help from Maya's renderer.

How can I get the depth values? Thanks a lot!

Mark

Is this an exercise in computer vision? Try to build a set of shapes from a depth map? If so, that's pretty darn cool! If not, why mess with the depth map, can't you just pull those objects into Unity as 3D meshes? (Not familiar enough with Maya to know, but I thought I remember seeing/reading about that as a feature.) Question about the depth map image: Is the rear wall tilted towards the camera? It looks like the whole wall is at the same distance/depth, and so shouldn't it be a solid color, rather than a gradient?

It's Computer Graphics. Mainly, this is what I have to do for my current academic project: I have a scene. I have to make a depth map of that scene to get the distance of each pixel. Knowing that, I have to re-project each pixel at that distance, with its original color from the scene, inside Unity. I thought of two ways: the first is to do everything in Unity, but I can't seem to find much about this subject; the second is to use Maya to get the depth values and then come back to Unity to create the scene with those values.
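The re-projection step described above can be sketched independently of either tool. The snippet below is a minimal illustration in Python, not Unity or Maya code. It assumes a simple pinhole camera looking down +z, a vertical field of view `fov_y_deg`, pixel (0, 0) at the bottom-left, and a depth value measured along the camera's forward axis. If the depth map stores the straight-line distance from the camera instead, the ray would need to be normalized first. The function name `unproject` and all parameters are made up for this example.

```python
import math

def unproject(px, py, depth, width, height, fov_y_deg):
    """Return the camera-space (x, y, z) point seen through pixel (px, py).

    Assumption: `depth` is measured along the camera's forward (+z) axis,
    not along the viewing ray.
    """
    # Half-extent of the image plane at unit distance from the camera.
    half_h = math.tan(math.radians(fov_y_deg) / 2.0)
    half_w = half_h * width / height
    # Pixel centre mapped to the range [-1, 1] in both directions.
    ndc_x = (px + 0.5) / width * 2.0 - 1.0
    ndc_y = (py + 0.5) / height * 2.0 - 1.0
    # Scale the unit-distance image-plane point out to the stored depth.
    return (ndc_x * half_w * depth, ndc_y * half_h * depth, depth)

# Example: top-right pixel of a 2x2 image, 90 degree field of view, depth 10.
point = unproject(1, 1, 10.0, 2, 2, 90.0)
```

Each returned point would then be drawn with the color of the same pixel in the original image.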

Thx for the reply :)

PS. I'm new to CG :(

Answer by Glurth · Oct 27, 2016 at 04:08 PM

Cool Project!

Since I don't know Maya that well, and it might help, here is how I would do that entirely in unity:

I would use RAYCASTING to generate the depth map (https://docs.unity3d.com/ScriptReference/Physics.Raycast.html): cast rays in the direction the scene camera is pointing to determine the distance from the camera plane to the closest mesh object. This would be done in a nested loop, casting one ray from each point of a plane of points. I would use WorldToScreenPoint to find the screen-position pixel of each detected scene point, and get its color: https://docs.unity3d.com/ScriptReference/Camera.WorldToScreenPoint.html , https://docs.unity3d.com/ScriptReference/Texture2D.GetPixel.html
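As a rough, engine-agnostic sketch of that nested loop (in Python rather than a Unity script): one ray per pixel is cast from the plane z = 0 along +z, and the nearest hit distance is stored. In Unity the per-ray test would be Physics.Raycast against the scene's colliders. Here a hypothetical `ray_sphere` helper and a scene made only of spheres stand in for it, purely so the example is self-contained.

```python
import math

def ray_sphere(origin, direction, center, radius):
    """Distance along a unit-length ray to the nearest hit, or None.

    Hits behind the origin (or rays starting inside the sphere) are ignored.
    """
    oc = [o - c for o, c in zip(origin, center)]
    b = sum(d * v for d, v in zip(direction, oc))
    c = sum(v * v for v in oc) - radius * radius
    disc = b * b - c
    if disc < 0.0:
        return None
    t = -b - math.sqrt(disc)
    return t if t >= 0.0 else None

def depth_map(spheres, width, height, size):
    """Cast one ray per pixel from a size x size square on the plane z = 0."""
    forward = (0.0, 0.0, 1.0)
    rows = []
    for j in range(height):          # rows, bottom to top
        row = []
        for i in range(width):       # columns, left to right
            x = ((i + 0.5) / width - 0.5) * size
            y = ((j + 0.5) / height - 0.5) * size
            hits = [ray_sphere((x, y, 0.0), forward, c, r) for c, r in spheres]
            hits = [t for t in hits if t is not None]
            row.append(min(hits) if hits else None)  # None means nothing hit
        rows.append(row)
    return rows

# A unit sphere 5 units in front of the plane, sampled on a 3x3 grid.
depths = depth_map([((0.0, 0.0, 5.0), 1.0)], 3, 3, 3.0)
```

Parallel rays like these give an orthographic depth map. For a perspective camera, each ray would start at the camera position and pass through its pixel instead.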

I would then use this gathered data to create a custom output MESH (https://docs.unity3d.com/ScriptReference/Mesh.html). Starting with a simple x/y-plane mesh, I would deform each vertex's z-coordinate by the depth-map value of its raycast. Rather than use the UV values of the mesh to reference a texture, I would simplify the process a bit by using a vertex shader to display the mesh. This allows us to colorize each vertex of the mesh with the color detected at the end of the raycast (https://docs.unity3d.com/ScriptReference/Mesh-colors.html).

Since the result is a single mesh, I would be interested to see the imperfect results as the resolution of the output mesh (and number of raycasts) changes. Best results would be, I suspect, 1 raycast per pixel of the original scene image.
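To make the deformed-plane idea concrete, here is a small sketch in Python of the data such a mesh needs: one vertex per depth sample, one color per vertex, and two triangles per grid cell. The function name `build_grid_mesh`, the `cell` spacing and the clockwise winding are assumptions for this example. In Unity the three lists would be assigned to a Mesh's vertices, colors and triangles.

```python
def build_grid_mesh(depth, colors, cell=1.0):
    """Turn a depth grid into vertices, per-vertex colors and triangle indices.

    depth[j][i] is the depth at row j, column i; colors has the same shape.
    """
    h, w = len(depth), len(depth[0])
    vertices, vertex_colors, triangles = [], [], []
    for j in range(h):
        for i in range(w):
            # x/y come from the grid position, z from the depth sample.
            vertices.append((i * cell, j * cell, depth[j][i]))
            vertex_colors.append(colors[j][i])
    for j in range(h - 1):
        for i in range(w - 1):
            a = j * w + i   # bottom-left corner of the cell
            b = a + 1       # bottom-right
            c = a + w       # top-left
            d = c + 1       # top-right
            # Two triangles per cell, clockwise when viewed from -z
            # (assumed winding; flip the order if faces come out inverted).
            triangles += [a, c, b, b, c, d]
    return vertices, vertex_colors, triangles

depth = [[1.0, 2.0], [3.0, 4.0]]
colors = [["red", "green"], ["blue", "white"]]
verts, cols, tris = build_grid_mesh(depth, colors)
```

One thing to keep in mind at one vertex per pixel: a Unity mesh with the default 16-bit index buffer holds at most 65,535 vertices, so a full-resolution image would likely have to be split into several meshes.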

Answer by mircbone · Oct 27, 2016 at 06:48 PM

Thanks for the reply!

The thing is, my input is a set of pictures that I can populate a skybox with. That means either I bring the 6 pictures and their 6 depth maps into Unity and somehow work from there, or I have to make depth maps from these 6 pictures directly in Unity, if there is a way.
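If the 6 pictures do come with 6 depth maps, placing a pixel in 3D is mostly bookkeeping about which way each face looks. A minimal Python sketch, under several assumptions that are not from this thread: each face is a 90 degree view, axes are left-handed with +y up, `u` and `v` run from 0 to 1 with (0, 0) at the bottom-left of the face, and depth is measured along the face's forward axis. The face names and orientations in `FACES` are illustrative and would have to match how the skybox images were actually rendered.

```python
# For each face: (forward, right, up) axes. Assumed orientation, check it
# against the real skybox images.
FACES = {
    "+z": ((0, 0, 1), (1, 0, 0), (0, 1, 0)),
    "-z": ((0, 0, -1), (-1, 0, 0), (0, 1, 0)),
    "+x": ((1, 0, 0), (0, 0, -1), (0, 1, 0)),
    "-x": ((-1, 0, 0), (0, 0, 1), (0, 1, 0)),
    "+y": ((0, 1, 0), (1, 0, 0), (0, 0, -1)),
    "-y": ((0, -1, 0), (1, 0, 0), (0, 0, 1)),
}

def cube_pixel_to_point(face, u, v, depth):
    """Return the 3D point for position (u, v) on a cube face at a given depth."""
    forward, right, up = FACES[face]
    # With a 90 degree field of view the face spans [-1, 1] at unit distance.
    sx = 2.0 * u - 1.0
    sy = 2.0 * v - 1.0
    return tuple(
        depth * (forward[k] + sx * right[k] + sy * up[k]) for k in range(3)
    )

# Centre of the +x face, 3 units away.
center = cube_pixel_to_point("+x", 0.5, 0.5, 3.0)
```

Running this for every pixel of all six faces gives one colored point per pixel around the camera position.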
