
Can a shader be used to not render parts of a skinned mesh?

I have several high poly, skinned meshes that move around the scene while animating. Often, one mesh intersects another and they move together at the same speed in the same direction. For performance reasons I would like the surface of the mesh that is inside (and therefore not visible to the camera) to not be rendered. Because the meshes move around the scene uncontrolled, the meshes can intersect in any number of ways. Also since it is a single mesh I cannot deactivate it or turn off its renderer, since a part of the object may still be visible.

Is it possible for a shader to know (based on depth perhaps) which parts of its surface have been occluded by another object and as a result stop rendering those parts of its surface?

Assuming your shaders write to/test the depth buffer (i.e. ZWrite On and ZTest LEqual, which are default for any non-transparent shaders) then the behaviour you describe already happens automatically - when a candidate pixel is about to be rendered, if there is already a pixel in the depth buffer that is closer to the camera then the candidate will be culled. Unless I've misunderstood your question, in which case perhaps a screenshot of what you're trying to describe would help.
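To make the depth test concrete, here is a minimal CPU-side sketch in Python. It is not Unity code: `make_buffers` and `draw_fragment` are made-up names, and it assumes smaller depth values are closer to the camera, which is only one possible convention.

```python
import math


def make_buffers(width, height):
    """Create a depth buffer cleared to 'infinitely far' and an empty color buffer."""
    depth = [[math.inf] * width for _ in range(height)]
    color = [[None] * width for _ in range(height)]
    return depth, color


def draw_fragment(depth, color, x, y, z, value):
    """Mimic ZTest LEqual with ZWrite On for one candidate pixel.

    The fragment is kept only if it is at least as close as what is
    already stored (assumption: smaller z means closer to the camera).
    Returns True if the fragment was written, False if it was rejected.
    """
    if z <= depth[y][x]:
        depth[y][x] = z      # ZWrite On: remember the new nearest depth
        color[y][x] = value  # the fragment becomes the visible pixel
        return True
    return False             # something closer is already there


depth, color = make_buffers(2, 1)
draw_fragment(depth, color, 0, 0, 5.0, "large sphere")
# This fragment lies behind the stored one, so it is rejected.
hidden_was_drawn = draw_fragment(depth, color, 0, 0, 7.0, "small sphere")
```

Note that the rejected fragment never reaches the color buffer, but the mesh it came from was still submitted and rasterised.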

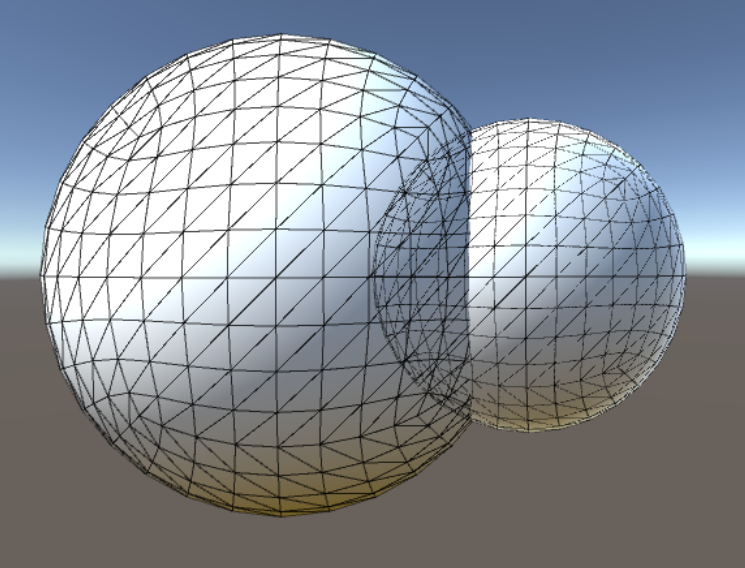

Ok I've added a screenshot. What I need is for the part of the small sphere that is inside the large sphere to not be rendered. This is similar to a boolean operation except the mesh itself would not be changed, just the intersecting polygons would not be rendered. Is this more clear?

To the best of my knowledge, no. You cannot use a shader for the purpose you described. A shader is a program that ultimately calculates the color of a pixel of the object it is assigned to. It works on the object's level (various parts of the shader program work on vertex level and pixel level) and cannot have any knowledge of nearby objects or their surface properties.

Like tanoshimi said, the depth check happens automatically anyway, but if the background object gets rendered first and the foreground object then draws over it, then yeah, you're dealing with "overdraw": essentially you're wasting time by drawing the background object.

Most 3D engines, Unity included, do their best to overcome this problem by drawing the closest objects first. But long story short, no, there really isn't anything you can do about it in your particular example.
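A rough illustration of why draw order matters for overdraw, as a Python sketch rather than anything Unity actually runs. `count_shaded` is a hypothetical helper, and it assumes that fragments failing the depth test are rejected before any shading work is done and that smaller depth means closer.

```python
import math


def count_shaded(depths_in_draw_order):
    """Count how many fragments pass a less-or-equal depth test at one pixel.

    Each passing fragment is one that would be shaded and written,
    so a higher count means more overdraw at that pixel.
    """
    nearest = math.inf
    shaded = 0
    for z in depths_in_draw_order:
        if z <= nearest:
            nearest = z
            shaded += 1
    return shaded


# Three surfaces covering the same pixel at different depths.
depths = [1.0, 3.0, 5.0]

front_to_back = count_shaded(sorted(depths))                # closest drawn first
back_to_front = count_shaded(sorted(depths, reverse=True))  # farthest drawn first
```

Drawn front to back, only the closest surface is shaded at that pixel; drawn back to front, all three are shaded and two of them are wasted work.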

In fact, you'd probably spend more CPU or GPU time figuring out what's occluded/clipped and discarding those surfaces than you would simply rendering everything without such conditions.

If you're currently struggling with performance, you're looking at the wrong solutions. Reduce the number of objects in the scene, use simpler shaders to draw them, and use static occluders and baked occlusion culling as much as possible. If you're using multiple dynamic lights, use the deferred renderer. If not, use the forward renderer and bake all non-dynamic lights in. If you have any custom-written shaders, give them a once-over against known shader optimization guides to make sure they're not running slower than they should.

@Eudaimonium, ok, how about if instead of trying to figure out exactly what is intersecting what, I only use a texture? So for example all of the black parts of the texture would not get rendered but the white parts would get rendered. This is similar to an alpha texture, but it would not just make the surface transparent, it would not render those polygons at all.

And how do you intend to figure out which texture parts are white and which are black?

Where does this texture come from? How will you render out this texture? One for each character? How many render targets will that come down to?

How would you "not render" what's on the black texture?

I think you're going about it completely the wrong way. There is no reliable way to skip rendering the clipped parts of an animated mesh, at least not one that actually saves performance. Focus your optimizations on the important stuff. I named a few up there.

I can use a set of premade textures, and then switch between them at runtime under certain conditions, that part I can work out. What I'm not sure of is how the shader would interpret the texture and render only the white parts of the surface, culling the black.
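For what it's worth, the per-pixel version of "render only the white parts" can be sketched like this. It is a Python stand-in for what a fragment shader would do, not actual shader code: `shade_with_mask`, the 0.5 threshold, and the nearest-texel lookup are all assumptions made for illustration.

```python
def shade_with_mask(fragments, mask, threshold=0.5):
    """Keep only fragments whose mask texel is at or above the threshold.

    fragments: list of (u, v, color) with u and v in [0, 1].
    mask: 2D list of values in [0, 1], rows indexed by v, columns by u
          (0.0 = black = do not draw, 1.0 = white = draw).
    Returns the colors of the fragments that survive.
    """
    height = len(mask)
    width = len(mask[0])
    surviving = []
    for u, v, color in fragments:
        # Nearest-texel lookup, clamped so u == 1.0 or v == 1.0 stays in range.
        x = min(int(u * width), width - 1)
        y = min(int(v * height), height - 1)
        if mask[y][x] < threshold:
            continue  # black texel: drop the fragment, nothing is written
        surviving.append(color)
    return surviving


mask = [[0.0, 1.0]]  # left half black, right half white
fragments = [(0.25, 0.5, "left pixel"), (0.75, 0.5, "right pixel")]
visible = shade_with_mask(fragments, mask)
```

In an HLSL fragment function the equivalent test is usually written with the `clip` intrinsic, e.g. `clip(maskValue - 0.5)`, which discards the pixel when the argument is negative. Keep in mind this only throws away pixels: the polygons are still submitted, skinned and rasterised, so it is unlikely to give the saving the question is after.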
